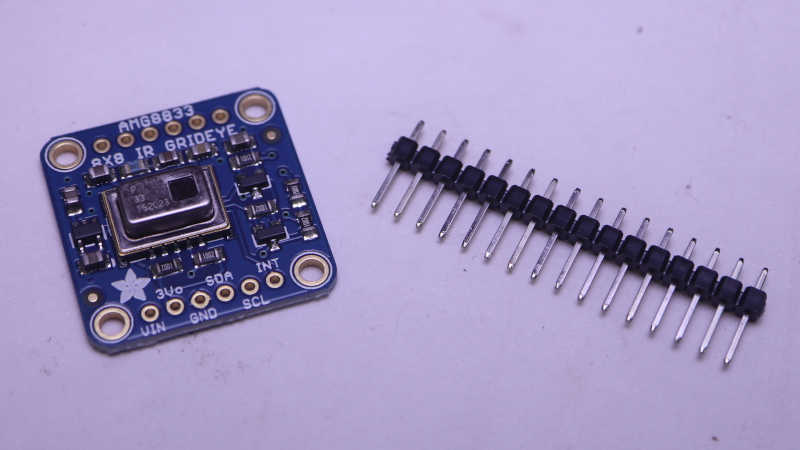

I’ve successfully overlaid data from an AMG8833 thermal sensor on top of the Adafruit Memento camera viewfinder, turning it into a thermal camera. A very slow and sluggish thermal camera, because my first draft was not written with performance in mind. To speed things up, I converted my thermal overlay to use TileGrid and take advantage of the compositing engine in Adafruit’s displayio library. In theory that should have been faster, but my attempt was not and I didn’t know how to debug it. I went looking for another approach and found that MicroPython/CircuitPython has ported a subset of the powerful Python NumPy library as ulab.numpy. Furthermore, there was an example of using this library to interpolate AMG8833 8×8 data to a 15×15 grid in the Adafruit learning guide Improved AMG8833 PyGamer Thermal Camera. Ah, this will do nicely.

Add Performance Timers

The first thing I got from that project is a reminder of an old lesson: I need to record timestamps during my processing so I know which part is slow. Otherwise I’m left with vague things like “TileGrid didn’t seem much faster”. I added several lines of code that recorded time.monotonic_ns() and a single line at the end of my loop that print() the deltas between those timestamps. Since the units are nanoseconds and these are slow operations, I got some very large numbers that were unwieldy to read. Instead of dividing these numbers by 1000, I right-shifted them by 10 bits, which divides them by 1024. The difference between “roughly microseconds” and “exactly microseconds” is not important right now and, in the spirit of performance, the shift should be much faster than a division.
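Here is a minimal sketch of that timing pattern. It is not the code from my project: read_sensor() and process() are hypothetical stand-ins for the real work, and shifted_us() is a helper name made up for this illustration. Only time.monotonic_ns() and the 10-bit right shift come from the description above.

```python
import time


def shifted_us(start_ns, end_ns):
    # Right shift by 10 bits divides by 1024, giving "roughly microseconds".
    return (end_ns - start_ns) >> 10


def read_sensor():
    # Hypothetical stand-in for reading an 8x8 frame from the thermal sensor.
    return [[20.0 + x + y for x in range(8)] for y in range(8)]


def process(frame):
    # Hypothetical stand-in for whatever processing is being measured.
    return [max(row) for row in frame]


# Record a timestamp before and after each stage of the loop body.
t_start = time.monotonic_ns()
frame = read_sensor()
t_read = time.monotonic_ns()
result = process(frame)
t_done = time.monotonic_ns()

# One print at the end of the loop reports the delta for each stage.
print(
    "read", shifted_us(t_start, t_read),
    "process", shifted_us(t_read, t_done),
    "total", shifted_us(t_start, t_done),
)
```

Because each unit is 1024 nanoseconds rather than 1000, the printed values come out about 2.4% smaller than true microseconds. That is close enough for finding which stage dominates.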

Measure TileGrid Implementation

Here are four frames from my TileGrid implementation:

read 38087 scaled 3099 mapped 1789 grid 1728 blit 28223 refresh 360370 total 433296

read 37789 scaled 3099 mapped 1759 grid 1758 blit 30190 refresh 359803 total 434398

read 38713 scaled 3129 mapped 1788 grid 1729 blit 29683 refresh 362098 total 437140

read 38296 scaled 3129 mapped 1758 grid 1759 blit 29146 refresh 360579 total 434667

With a total of ~434ms per loop, this is just a bit over two frames per second. Here’s the breakdown on what those numbers meant:

- “read” is the time consumed by reading 8×8 sensor data from the AMG8833 sensor. This ~38ms is out of my control and unavoidable. It must occur for basic functionality of this thermal camera.

- “scaled” is the time spent normalizing 8×8 sensor data points between the maximum and minimum values read on this pass. This ~3ms is my code and I can try to improve it.

- “mapped” is the time spent translating normalized 8×8 sensor data into an index into my thermal color palette. This ~1.7ms is my code and I’m surprised it’s over half of “scaled” when it does far less work. Perhaps ~1.7ms is how long it takes CircuitPython to run through “for y in range(8): for x in range(8):” by itself no matter what else I do.

- “grid” is the time spent updating TileGrid indices to point to the color indices calculated in “mapped”. Since it’s basically the same as “mapped” I now know updating TileGrid indices does not immediately trigger any bitmap processing.

- “blit” copied OV5640 sensor data into a bitmap for compositing. This ~30ms is out of my control and unavoidable. It must occur for basic functionality of this thermal camera.

- “refresh” is where most of the time was spent. A massive ~360ms triggered by a single line of my code. This included pulling bitmap tiles based on TileGrid indices, rendering them to the TileGrid, compositing the thermal overlay TileGrid on top of the OV5640 bitmap TileGrid, and finally sending all of that out to the LCD.
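To make the “scaled” and “mapped” steps concrete, here is a rough sketch in plain Python of what those two passes do. It is an illustration under assumptions, not the code from my commit: the function names, the 16-entry PALETTE_SIZE, and the handling of a completely flat frame are all made up for this example.

```python
PALETTE_SIZE = 16  # Assumed number of colors in the thermal palette.


def scale_frame(frame):
    # "scaled": normalize every reading to 0.0-1.0 between this frame's
    # minimum and maximum values.
    lo = min(min(row) for row in frame)
    hi = max(max(row) for row in frame)
    span = hi - lo
    if span == 0:
        # A completely flat frame has no range to normalize against.
        return [[0.0 for _ in row] for row in frame]
    return [[(value - lo) / span for value in row] for row in frame]


def map_to_palette(scaled, palette_size=PALETTE_SIZE):
    # "mapped": turn each normalized value into a palette index, clamping
    # so that 1.0 lands on the last color instead of one past the end.
    top = palette_size - 1
    return [[min(top, int(value * palette_size)) for value in row]
            for row in scaled]


# Made-up 8x8 frame with temperatures rising from 20.0 to 83.0.
sample = [[20.0 + y * 8 + x for x in range(8)] for y in range(8)]
indices = map_to_palette(scale_frame(sample))
print(indices[0][0], indices[7][7])
```

Both passes visit all 64 cells, which fits the observation that “mapped” costs a sizable fraction of “scaled” despite doing less arithmetic per cell.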

Back to Bitmap

I don’t know why my TileGrid compositing consumed so much time. I’m probably doing something silly that crippled performance but I don’t know what it might be. And when it’s all triggered by a single line of my code, I don’t know how to break it down further. I will have to try something else.

https://github.com/Roger-random/circuitpython_tests/commit/1a62d8adbbeecf9d05ad79ff239906367fbfb440