When I started playing with computers, audio output was primitive and there was no means of audio input at all. Voice-controlled computers were pure science fiction. When the Sound Blaster gave my computer a voice, it also enabled rudimentary voice recognition. The mechanics were crude and the recognition poor, but the promise was there.

Voice recognition capabilities have improved in the years since. Phone-operated systems have enabled voice-controlled menus within the past decade or so. (“For balance and payment information, press or say 4.”) It is now considered easy to recognize words in a specific domain.

Just within the past few years, advances in neural networks (“deep learning”) have enabled tremendous leaps in recognition accuracy. No longer constrained to differentiating numbers, as when navigating a voice menu, voice commands can now be general enough to be interesting. And so now we have digital assistants like Apple’s Siri, Google’s Assistant, Amazon’s Alexa, and Microsoft’s Cortana.

But when I try to communicate with them, I still feel frustrated by the experience. The problems are rarely technological now – the recognition rate is pretty good. But there is something else. I had been phrasing it as “low-bandwidth communication” but I just read an article from Wired that offers a much better explanation: these voice-controlled robots are designed to be chatty.

The problem has moved from one of technical implementation (“how do we recognize words”) to one of user experience (“how do we react to words we recognize”) and I do not appreciate the current state of the art at all. The article lays out why designers choose to do this: to make the audio assistants less intimidating to people new to the technology, they make them sound like a polite butler instead of an efficient computer. I understand the reason, but I’m eager for the industry to move past this introductory phase. Or at least start offering a “power user” mode.

After all, when I perform a Google search, I don’t type in the query like I would to a person. I don’t type “Hey, I’d like to read about neural networks, could you bring up the Wikipedia article, please?” No, I type in “wikipedia neural network”.

Voice interaction with a computer should be just as terse and efficient, but we’re not there yet. Even worse, we’re intentionally not there due to user experience design intent, and that just makes me grind my teeth.

Today, if I want a voice-controlled light, I have to say something like “Alexa, turn on the hallway lights.”

I look forward to the day when I can call out:

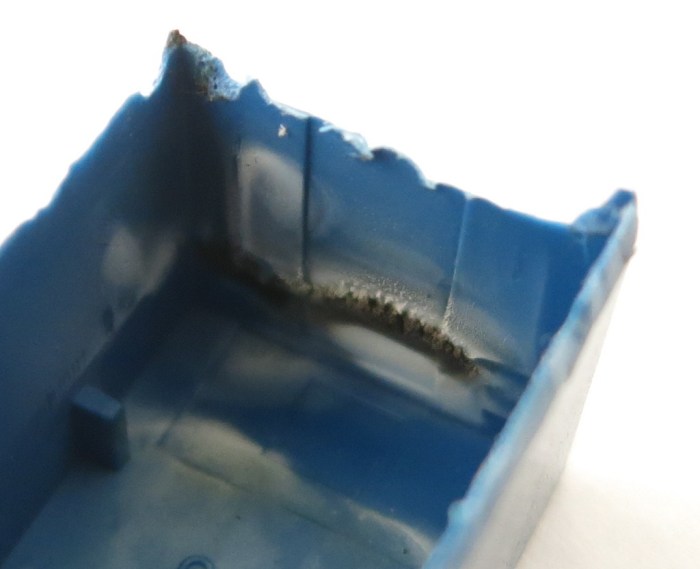

Looking at the inside of the just-removed case, we can see a lot of heat damage. Black char marks the hottest areas, and discolored white marks the rest.

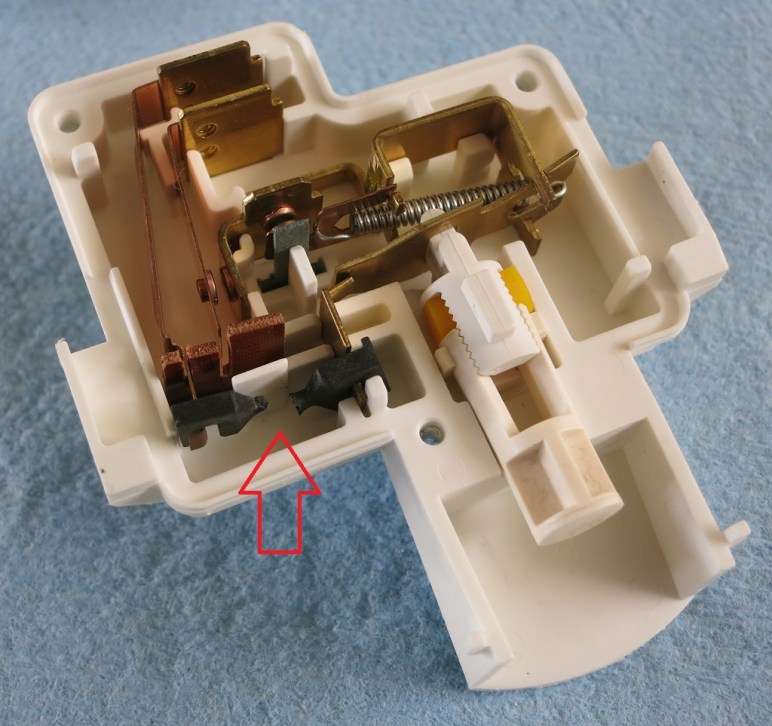

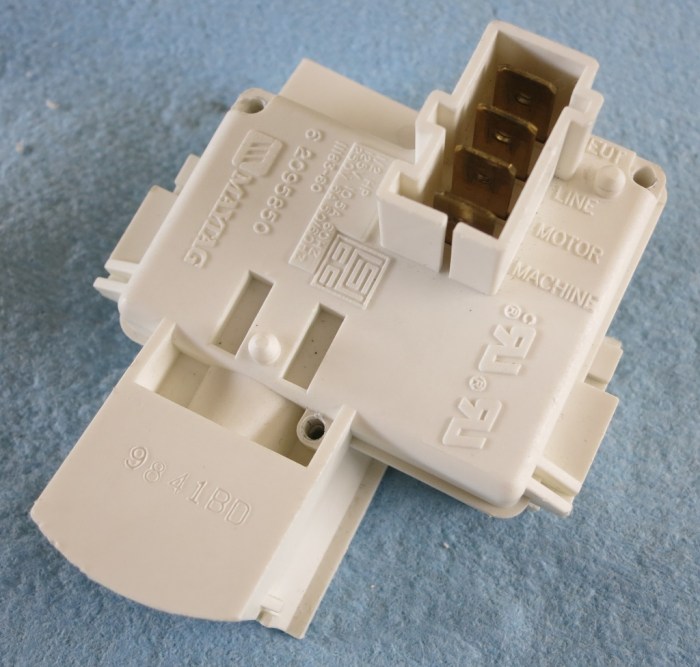

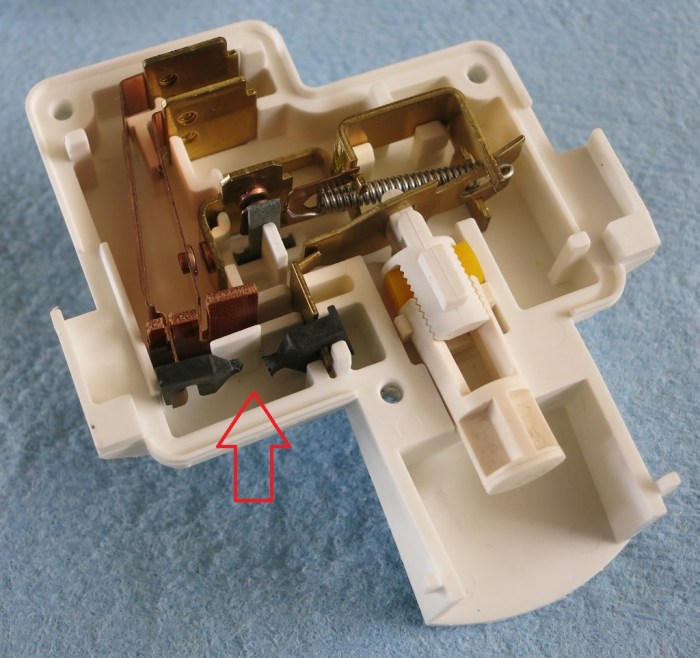

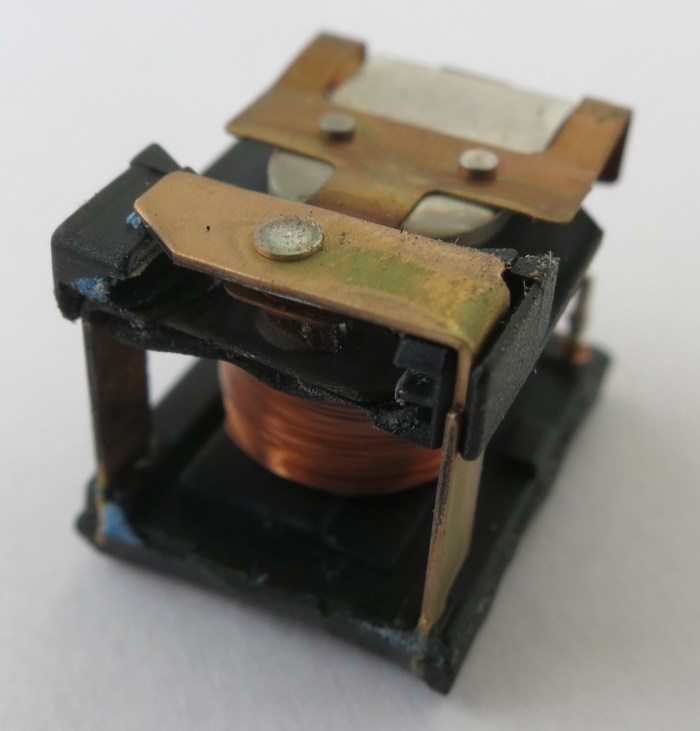

It’s a fairly straightforward relay, with the coil actuating an armature that moves between contacts on the plates above and below it. The armature and contact area sits immediately behind the blackened, charred bits of the case. Looking at the armature and contacts themselves, we can see the relay died an unhappy death.

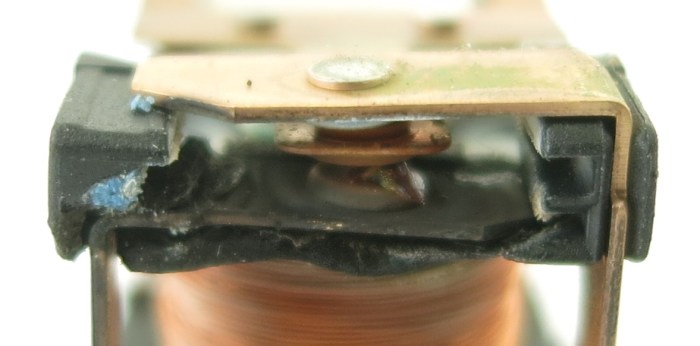

Everything in the contact area is distorted and/or charred. A black plastic-feeling piece holds everything in position relative to each other, and it could no longer do its job once heat distorted it and moved things out of alignment. Between the armature and the bottom contact is a blob of melted something that looks vaguely like solder. The bits of blue visible are parts of the blue casing that have melted onto this assembly. While the top contact looks OK in this picture, the side facing the armature is just as blackened and charred as the visible face of the bottom contact. The armature itself is barely visible here, but it is discolored and distorted near the contacts.

Looking around the perimeter for fasteners, the four rods immediately stood out. They are spread around the perimeter and run almost the entire height of the disposal. I tried the easy thing first, but they refused to budge with my flat-head screwdriver. So out came the angle grinder with the cutting wheel, which quickly cut through the exposed shafts.

Unfortunately, that did not allow the top part to come free. Something else was holding it together. Whether it was a mechanism I don’t understand or corrosion, I could not tell. But there were no other obvious fasteners to release on the top side, nor was there a convenient point to start prying.

Once the bottom was cut free, I had a better view to find the next best place to cut the stator free. When I pulled the stator off, I was very surprised to feel the rotor flex along with the stator, because I had expected it to stay with the rest of the grinder.

The source of the problem became clear once the stator came off: the metal plate separating the electric motor from the grinder had been severely weakened by corrosion. I’m sure there were only a few holes (or maybe only one) when I pulled this from the sink, but the whole plate was weakened by corrosion, so it fell apart when I pulled the stator off the bottom.

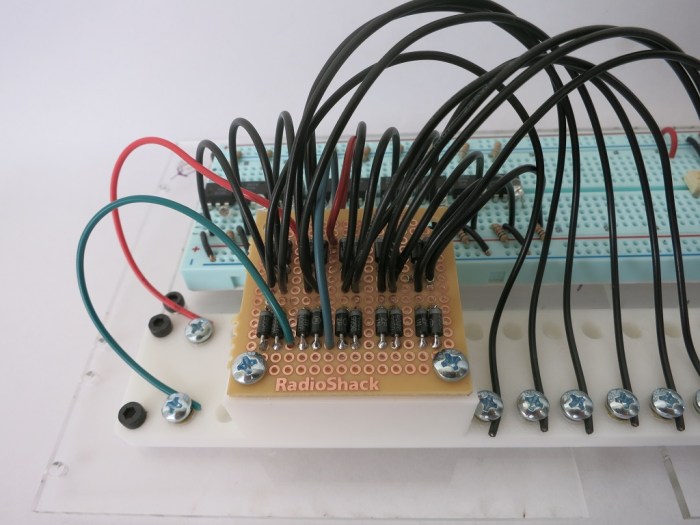

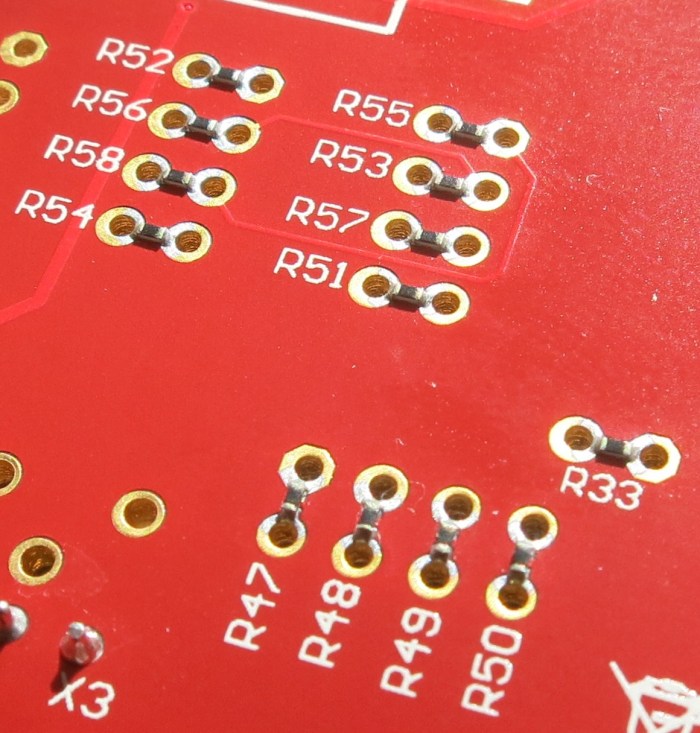

But I was not introduced to them in isolation – I saw them on the Curiosity board and in this context their purpose was immediately obvious: a link between pins on the PIC socket and the peripheral options built on that board. If I wanted to change which pins connected to which peripherals, I would not have to cut traces on the circuit board; I would just have to unsolder the zero ohm resistor. Then I could change the connection on the board by soldering to the empty through-holes placed on the PCB for that purpose.
