Continuing this beginner’s exploration of ROS, I got a taste of how a robot can be more intelligent about its movement than the random walk of turtlebot3_drive. The experience also showed me how much I still have to learn about effectively using all the open source algorithms available through the ROS ecosystem, but seeing these things work is great motivation to put in the time to learn.

There’s an entire chapter in the manual dedicated to navigation. It is focused on real robots but it only needs minimal modification to run in simulation. The first and most obvious step is to launch the “turtle world” simulation environment.

roslaunch turtlebot3_gazebo turtlebot3_world.launch

Then we can launch the navigation module, referencing the map we created earlier.

roslaunch turtlebot3_navigation turtlebot3_navigation.launch map_file:=$HOME/map.yaml

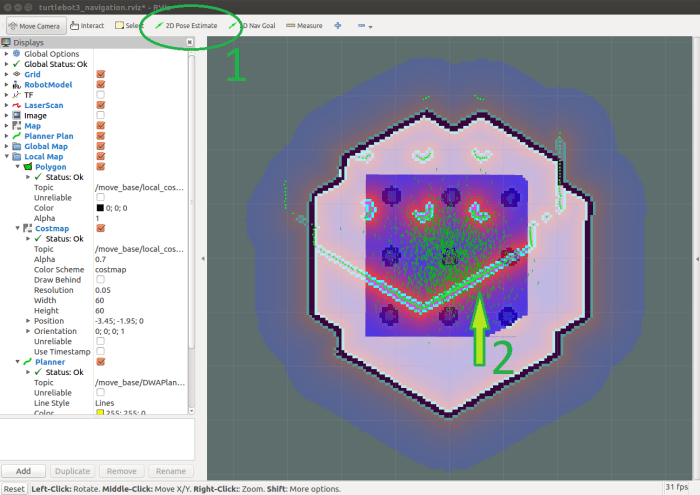

When RViz launches, we see one and a half turtle worlds. The complete turtle world is the map data, the incomplete turtle world is the laser distance data. We see the two separately because the robot doesn’t yet know where it is, resulting in a gross mismatch between map data and sensor data.

ROS navigation can determine the robot’s position, but it needs a little help with the initial position. We provide this help by clicking on “2D Pose Estimate” and drawing an arrow. First we click on the robot’s position on the map, then we drag upwards so the arrow points up, representing the direction our robot is facing.

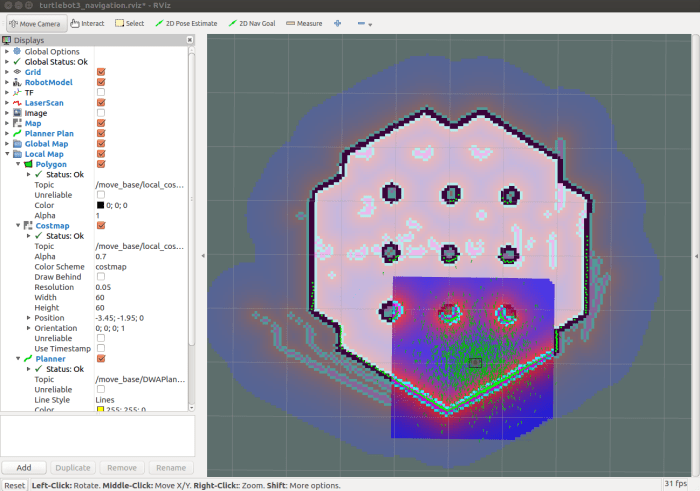
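
As far as I understand it, the arrow drawn with “2D Pose Estimate” ends up as a pose message on the `initialpose` topic: a position plus an orientation stored as a quaternion. The sketch below is plain Python with no ROS dependency, just to show how a click point and a drag direction could turn into those numbers. The function name `pose_from_arrow` and the coordinates are made up for illustration, and this is not RViz’s actual code.

```python
import math


def pose_from_arrow(start_xy, end_xy):
    """Turn an arrow (click point, drag point) into a planar pose.

    Hypothetical helper for illustration only, not part of ROS or RViz.
    """
    x0, y0 = start_xy
    x1, y1 = end_xy
    # Heading of the arrow, measured counter-clockwise from the +x axis.
    yaw = math.atan2(y1 - y0, x1 - x0)
    # A rotation purely about the z axis, written as a quaternion (x, y, z, w).
    quaternion = (0.0, 0.0, math.sin(yaw / 2.0), math.cos(yaw / 2.0))
    return {"position": (x0, y0, 0.0), "orientation": quaternion, "yaw": yaw}


# Made-up map coordinates: click at (-2.0, -0.5), drag one meter along +y.
pose = pose_from_arrow((-2.0, -0.5), (-2.0, 0.5))
print(pose)
```

The position comes from where the arrow starts and the heading from where it points, which is why both the click and the drag matter when giving the robot its initial pose.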

In theory, once the robot knows roughly where it is and which direction it is facing, it can match laser data up to the map data and align itself the rest of the way. In practice it seems like we need to be fairly precise about the initial pose information for things to line up.

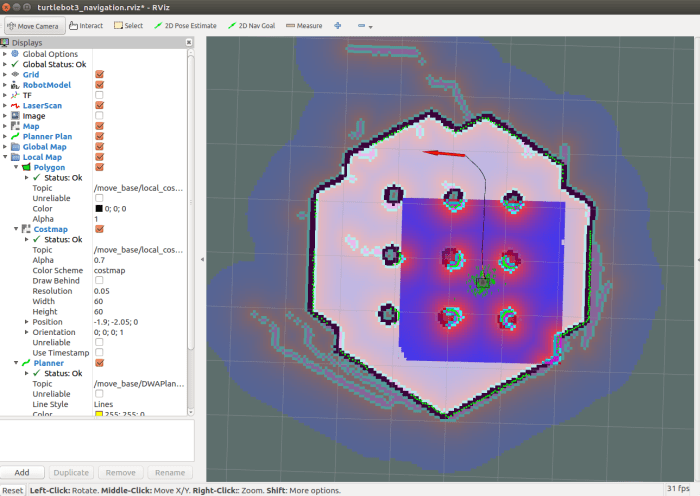

Once aligned, we can click on “2D Nav Goal” to tell our robot navigation routine where to go. The robot will then plan a route and traverse that route, avoiding obstacles along the way. During its travel, the robot will continuously evaluate its current position against the original plan, and adjust as needed.
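
To get a feel for the “plan a route, then adjust as needed” idea, here is a toy sketch in plain Python. It runs a breadth-first search on a tiny made-up occupancy grid, then replans from a new position after an obstacle appears. This is only an illustration of the general concept under my own assumptions (the grid, the function name `plan_route`, and the choice of breadth-first search are all mine). It is not the algorithm the ROS navigation stack actually uses.

```python
from collections import deque


def plan_route(grid, start, goal):
    """Breadth-first search on a 4-connected grid.

    grid[r][c] == 0 means free, 1 means obstacle. Assumes start is free.
    Returns a list of (row, col) cells from start to goal, or None.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}  # also serves as the visited set
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None  # goal unreachable


# Made-up map: a short wall in the middle row.
grid = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
route = plan_route(grid, (0, 0), (2, 3))
print("initial plan:", route)

# Pretend the robot has reached (1, 0) when a new obstacle shows up at (2, 1).
grid[2][1] = 1
new_route = plan_route(grid, (1, 0), (2, 3))
print("replanned:", new_route)
```

The second call is the interesting part: the original plan is no longer valid, so the planner is simply run again from wherever the robot currently is.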

That was a pretty cool demo!

Of course there’s a lot of information shown in RViz, representing many things I still need to sit down and learn in the future, such as:

- What are those little green arrows? They’re drawn in RViz under the category named “Amcl Particles” but I don’t know what they mean yet.

- There’s a small square surrounding the robot showing a red-to-blue gradient. The red appears near obstacles and blue indicates no obstacles nearby. The RViz check box corresponding to this data is labelled “Costmap”. I’ll need to learn what “cost” means in this context and how it can be adjusted to suit different navigation goals.

- What causes the robot to deviate off the plan? In the real world I would expect things like wheel slippage to cause a robot to veer off its planned path. I’m not sure if Gazebo helpfully throws in some random wheel slippage to simulate the real world, or if there are other factors at play causing path deviations.

- Sometimes the robot happily traverses the route in reverse, sometimes it performs a three-point-turn or in-place turn before beginning its traversal. I’m curious what dictates the different behaviors.

- And lastly: Why do we have to do mapping and navigation as two separate steps? It was a little disappointing that this robot demo separates them, as I had thought the state of the art was well past the point where we could do both simultaneously. There’s probably a good reason why this is a hard problem; I just don’t know it yet.

Lots to learn!