Examination of the LCD screen on the Hackaday Belgrade 2018 badge (predecessor to the upcoming Hackaday Superconference 2018 badge) continues! The previous post highlighted some important tidbits from Reading The (Fine) Manual on the components; now it's time to get hands-on and collect some experimental data. The default "user program" on the badge showcased a small bitmap operation that works one pixel at a time. This is very inefficient: it takes almost 2 seconds to fill the screen. I'm confident the badge can go faster, so let's test how fast.

Maximum frame rate: ~80fps

Our tool for these tests is tft_fill_area() in disp.c. This function fills a screen area with a single color, for example when clearing the screen. Looking at the code, we see it sends 320 * 240 pixels of color data using a single fixed value. Because the value is fixed, this is as fast as we'll ever be able to fill the screen with the existing interface configuration. Anything more complex will require extra computation, and will therefore be slower than sending a single fixed value.
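To make the "fixed value" point concrete, here is a minimal sketch of what a single-color fill loop boils down to. It is not the actual code in disp.c: `fill_area_sketch()` and `bus_write_pixel()` are hypothetical names, the step that sets the target window on the LCD controller is omitted, and the bus write is mocked with a counter so the sketch can run on a desktop.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SCREEN_W 320
#define SCREEN_H 240

/* Hypothetical stand-in for the badge's LCD bus. On hardware this would
 * write one pixel's color to the controller; here it only counts writes
 * and remembers the last value written. */
static size_t   bus_writes;
static uint32_t bus_last_color;

static void bus_write_pixel(uint32_t color)
{
    bus_writes++;
    bus_last_color = color;
}

/* Sketch of a fixed-color area fill (hypothetical, not disp.c). After the
 * omitted window-setup step, the same color value is pushed w * h times.
 * Nothing is computed per pixel inside the loop. */
static void fill_area_sketch(int x, int y, int w, int h, uint32_t color)
{
    assert(x >= 0 && y >= 0 && w >= 0 && h >= 0);
    assert(x + w <= SCREEN_W && y + h <= SCREEN_H);

    size_t count = (size_t)w * (size_t)h;
    for (size_t i = 0; i < count; i++) {
        bus_write_pixel(color);
    }
}
```

Filling the whole 320 * 240 screen this way issues 76,800 identical pixel writes; the loop body does nothing except push the same value, which is why no other fill can beat it over the same interface.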

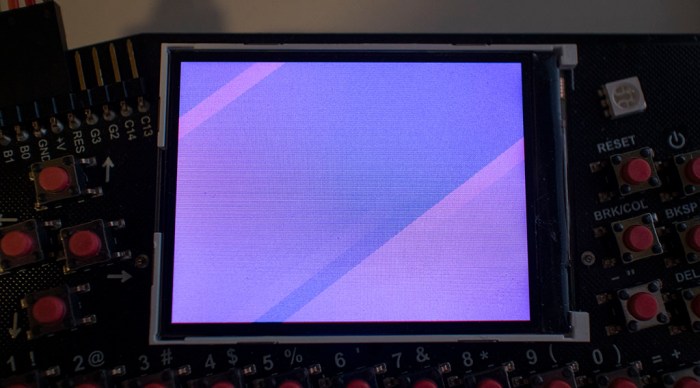

A test program that cycles the fill through all the RGB values shows that it takes about 3 seconds to traverse the 256 values of a single byte. 256 / 3 ≈ 85, so roughly 80 frames per second. Of course, the frame rate for real tasks will be slower, but this isn't bad at all and gives confidence that it'll be fine to stick with the existing interface configuration. I also confirmed the visual artifacts from updating the screen this quickly without VSYNC: on a screen that's supposed to be filled with a single color, we see diagonal rendering artifacts.
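The frame-rate estimate is simple arithmetic, sketched below with hypothetical helper names. At 256 / 3 ≈ 85 frames per second, each full-screen fill gets about 11.7 ms, and the screen absorbs roughly 6.55 million pixels per second (320 * 240 * 85.3). These figures are derived from the rough 3-second timing above, not separately measured.

```c
#include <assert.h>

#define SCREEN_W 320
#define SCREEN_H 240

/* Frames per second when `frames` full-screen updates take `seconds`. */
static double frames_per_second(int frames, double seconds)
{
    assert(frames >= 0 && seconds > 0.0);
    return (double)frames / seconds;
}

/* Time budget per frame, in milliseconds, at a given frame rate. */
static double frame_time_ms(double fps)
{
    assert(fps > 0.0);
    return 1000.0 / fps;
}

/* Pixels pushed to the screen per second when every frame is full-screen. */
static double pixels_per_second(double fps)
{
    return (double)SCREEN_W * SCREEN_H * fps;
}
```

For example, `frames_per_second(256, 3.0)` gives about 85.3, and feeding that into `frame_time_ms()` gives about 11.7 ms per frame.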

Render-by-line frame rate: ~30fps

Since we don't have enough memory for a full-screen 32-bit buffer (and we don't have VSYNC to make good use of one anyway), it's time to scale down our ambitions. Single-pixel operations like the default demo program are too slow, so let's go with a convenient middle ground between per-pixel and per-screen: operate at the per-line level. A 32-bit buffer for a single line of 320 pixels takes only 320 * 4 = 1280 bytes (1.25 kilobytes), well within our memory budget.
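The memory arithmetic, as a small sketch assuming 4 bytes per pixel as in the text: a line buffer is 1,280 bytes, while a full-screen buffer would need 320 * 240 * 4 = 307,200 bytes (300 KiB). The helper name `buffer_bytes()` is illustrative only.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SCREEN_W 320
#define SCREEN_H 240
#define BYTES_PER_PIXEL 4 /* 32-bit pixels, as assumed in the text */

/* Bytes needed for a width x height buffer of 32-bit pixels. */
static size_t buffer_bytes(size_t width, size_t height)
{
    return width * height * BYTES_PER_PIXEL;
}

/* A single-line buffer: 320 pixels * 4 bytes = 1280 bytes. */
static uint32_t line_buffer[SCREEN_W];

/* Compile-time check (C11 static_assert from <assert.h>) that the
 * line buffer really is 1.25 KiB. */
static_assert(sizeof line_buffer == 1280, "one 32-bit line should be 1280 bytes");
```

The full-screen figure is 240 times the line figure, which is the whole argument for working line by line.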

The test program does a little bit of work, filling the line buffer with a pattern before sending the line to the screen. It also incurs the transmit overhead 240 times (once per screen line) instead of once for the whole screen, so I expected it to be slower. How much slower? It now takes about 8 seconds to run through the 256 values of a single byte: 256 / 8 = 32, so roughly 30 frames per second. This frame rate is more realistic, and still a decent speed for modest projects.
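A sketch of the per-line approach, under stated assumptions: `send_line()` is a hypothetical stand-in for whatever sets a one-line window and streams the pixels (mocked here with counters), and the 0x00RRGGBB pattern is arbitrary rather than the one the actual test program used. The point is the structure: compute one line into the 1,280-byte buffer, transmit it, and repeat 240 times per frame.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define SCREEN_W 320
#define SCREEN_H 240

static uint32_t line_buffer[SCREEN_W];

/* Hypothetical stand-in for sending one line to the LCD. On the badge this
 * would set a one-line window and stream the pixels; here it only counts
 * calls and pixels so the sketch runs anywhere. */
static size_t lines_sent;
static size_t pixels_sent;

static void send_line(int y, const uint32_t *pixels, size_t count)
{
    assert(y >= 0 && y < SCREEN_H);
    assert(pixels != NULL);
    lines_sent++;
    pixels_sent += count;
}

/* Render one frame line by line: compute a pattern into the line buffer,
 * then transmit it. `value` is the byte being cycled through (0..255). */
static void render_frame_by_line(uint8_t value)
{
    for (int y = 0; y < SCREEN_H; y++) {
        for (int x = 0; x < SCREEN_W; x++) {
            /* Arbitrary pattern packed as 0x00RRGGBB (an assumption). */
            uint8_t r = value;
            uint8_t g = (uint8_t)(x + value);
            uint8_t b = (uint8_t)(y + value);
            line_buffer[x] = ((uint32_t)r << 16) | ((uint32_t)g << 8) | b;
        }
        send_line(y, line_buffer, SCREEN_W);
    }
}
```

One frame makes 240 `send_line()` calls carrying 76,800 pixels in total: the same pixel count as the full-screen fill, but with 240 transmissions instead of one plus per-pixel pattern work.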

But some people will always push for faster. Has anyone applied that spirit of performance to the badge? Let’s look around the web…