After examining the enclosure of a Roku Premiere model 4620X (“Cooper”) that I took apart, attention turns to the solitary circuit board inside the device. A piece of cast aluminum under its top lid has every appearance of a heat sink, so the first thing I did was pop off the thin metal shield beneath it to confirm. Yep, there’s a microprocessor under there, with a bit of black thermal compound to bridge the small gap between the chip and the metal shield.

The other metal shield is soldered directly to the board, and I couldn’t remove it (nicely) to see what’s inside. So I flipped the board over instead, and found a shield whose shape mirrors the top shield and covers nearly (if not exactly) the same area.

Compared to its topside counterpart, this one has an extra twist: additional metal bridging the center in a “T” pattern. Or maybe it’s a circle with three legs? This must be intentional, but I couldn’t figure out why.

And just for fun, here is the board side by side with a Raspberry Pi 4, which has broadly similar specifications: an ARM-powered, 4K UHD-capable networked device.

Using a cotton swab and some isopropyl alcohol, I cleaned off the thermal compound to read the markings on the CPU.

I see two logos: a stylized “M” (MediaTek?) with ARM, and below them the following:

MS09380AMZ-R62-NA1

ATKR524B

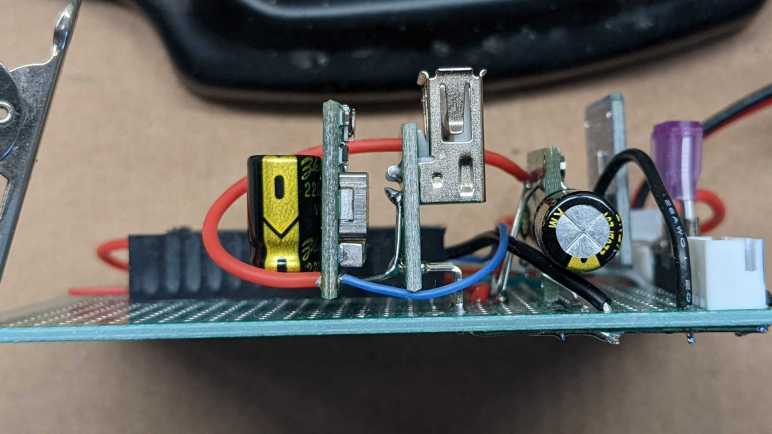

1637BSeveral connectors are visible around the board, not all of which are used. Starting from the upper left, we have the power connector.

Of its five soldered points, the four around the sides are all electrically connected to ground. Only the bottom center point is different, picking up 12V from the AC adapter. Below the connector, a large round contact point (with a bit of solder on it) is one of two grounding points for the aluminum heat sink.

To the right is an unpopulated connector footprint, also with four surrounding ground points, plus three unused electrical contacts. I’m not sure what this would be. My first thought was an alternate video connector, but there aren’t enough pins for any type of DisplayPort. Composite video and S-Video are too old-school for a 4K-capable video streaming box, so I ruled those out. It is too small to be a pair of RCA jacks for analog audio, so my best guess is a provision for TOSLINK optical audio.

Next is the HDMI connector, with several meticulously routed differential signal pairs visible.

The final connector position on this edge is also unpopulated, probably meant for an 8P8C jack for wired Ethernet, with an adjacent unpopulated chip footprint that likely relates to wired networking. Their absence means this particular Roku is WiFi-only.

On the left edge of the board is definitely a switch, complete with the schematic symbol for a switch. Since there’s already a reset switch on board, this one is probably for development, test, or debug purposes.

On the right edge is what looks like a provision for a USB Type-A socket. It may serve similar dev/test/debug purposes, but Roku may also have contemplated a USB port for playing a slide show of pictures from a USB flash drive, a feature I see on many other media boxes.

The bottom edge has two antennae, and my RF skills aren’t enough to know what to do with them. A status LED is on the right edge, but I have plenty of LEDs and saw nothing particularly special about this one. In between the antennae, though, is a receiver for the infrared remote. I’ve come across similar receivers before, and I always thought “that might be fun to play with” but never did… until now.