One of the objectives of OpenAI Gym is to have a common programming interface across all of its different environments. And it certainly looks pretty good on the surface: we reset() the environment, take actions to step() through it, and at some point we get True as the return value for the done flag. Having a common interface allows us to use the same algorithm across multiple environments with minimal modification.
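
To make the shape of that interface concrete, here is a minimal sketch of the reset/step/done loop. It assumes the classic Gym API, where reset() returns an observation and step() returns (observation, reward, done, info). CountdownEnv and run_episode are hypothetical names made up for this illustration so the loop runs without Gym installed; a real Gym environment could be passed to run_episode in its place.

```python
class CountdownEnv:
    """Hypothetical stand-in environment, not part of Gym.

    It mimics the classic Gym interface: reset() returns an observation,
    and step() returns (observation, reward, done, info).
    """

    def __init__(self, limit=5):
        self.limit = limit
        self.steps = 0

    def reset(self):
        self.steps = 0
        return [0.0]

    def step(self, action):
        # The action is ignored; the episode simply ends after `limit` steps.
        self.steps += 1
        observation = [float(self.steps)]
        reward = 1.0
        done = self.steps >= self.limit
        return observation, reward, done, {}


def run_episode(env, choose_action):
    """Run one episode and return the total reward collected."""
    observation = env.reset()
    total_reward = 0.0
    done = False
    while not done:
        action = choose_action(observation)
        observation, reward, done, info = env.step(action)
        total_reward += reward
    return total_reward


# Always pick action 0; the stand-in environment ends after five steps.
print(run_episode(CountdownEnv(limit=5), lambda observation: 0))  # prints 5.0
```

Nothing in run_episode depends on which environment it is given, which is the appeal of the common interface.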

But “minimal” modification is not “zero” modification. Some environments are close enough that no modifications are required, but not all of them. Sometimes an environment is just not the right fit for an algorithm, and sometimes there are important details which differ from one environment to another.

One way environments differ is in the types of spaces they use. An environment has two: an observation_space that describes the observed state of the environment, and an action_space that outlines valid actions an agent may choose to take. These spaces change from one environment to another because environments tend to have different observable properties and different actions an agent can take within them.

As an exercise, I thought I’d try to take the simple Q-Learning algorithm demonstrated to solve the Taxi environment and slam it on top of CartPole just to see what happens. To do that, I had to take CartPole’s state, which is an array of four floating point numbers, and convert it into an integer suitable for use as an array index.
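
For context on why an integer index is needed, here is a minimal sketch of a tabular Q-learning update. It is the textbook update rule, not necessarily the exact code from the Taxi example. The helper names and the alpha and gamma values are assumptions chosen for illustration. The table has one row per integer state and one column per action (CartPole has two: push left and push right).

```python
def make_q_table(n_states, n_actions):
    """Create a table of zeros with one row per state, one column per action."""
    return [[0.0] * n_actions for _ in range(n_states)]


def q_update(q_table, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """Apply one Q-learning update in place.

    alpha (learning rate) and gamma (discount factor) are illustrative
    defaults, not values taken from the original experiment.
    """
    best_next = max(q_table[next_state])
    target = reward + gamma * best_next
    q_table[state][action] += alpha * (target - q_table[state][action])


# Integer states index directly into the table, which is why the four
# floating point numbers must first be converted to a single integer.
q_table = make_q_table(n_states=10000, n_actions=2)
q_update(q_table, state=5555, action=1, reward=1.0, next_state=5556)
print(q_table[5555])
```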

As a naive approach, I’ll slice up the space into discrete slices. Each of the four numbers will be divided into ten bins. Each bin will correspond to a single digit, zero to nine, so the four numbers will be composed into a four-digit integer value.
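
The digit-per-dimension encoding can be sketched roughly as follows. The function names are hypothetical, and the ranges of -1.0 to 1.0 are made-up placeholders rather than real CartPole limits. Values outside the given range are clamped into the first or last bin, which is one possible choice among several.

```python
def to_digit(value, low, high, bins=10):
    """Map a value in [low, high] to a bin index from 0 to bins - 1."""
    if high <= low:
        return 0
    fraction = (value - low) / (high - low)
    index = int(fraction * bins)
    # Clamp so out-of-range values land in the first or last bin.
    return max(0, min(bins - 1, index))


def encode_state(observation, lows, highs, bins=10):
    """Compose one digit per dimension into a single integer."""
    state = 0
    for value, low, high in zip(observation, lows, highs):
        state = state * bins + to_digit(value, low, high, bins)
    return state


# Placeholder ranges for illustration only.
lows = [-1.0, -1.0, -1.0, -1.0]
highs = [1.0, 1.0, 1.0, 1.0]

print(encode_state([0.0, 0.0, 0.0, 0.0], lows, highs))      # prints 5555
print(encode_state([-1.0, -1.0, -1.0, -1.0], lows, highs))  # prints 0
print(encode_state([1.0, 1.0, 1.0, 1.0], lows, highs))      # prints 9999
```

With ten bins and four dimensions, the result always falls between 0 and 9999, so it can index a table of 10000 rows.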

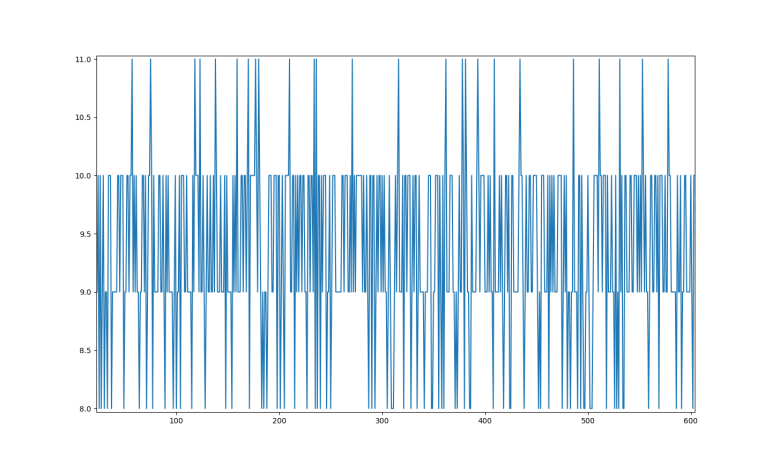

To determine the size of these bins, I executed 1000 episodes of the CartPole simulation while taking random actions via action_space.sample(). The ten bins are evenly divided between the maximum and minimum values observed in this sample run, and Q-learning is off and running… doing nothing useful.
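
A rough sketch of that sampling step is below. The three function names are hypothetical. collect_observations assumes the classic Gym API, where reset() returns an observation and step() returns (observation, reward, done, info); newer Gym releases return different tuples and would need small changes. The short observation list at the end is made up so the range and edge calculations can be tried without Gym installed.

```python
def collect_observations(env, episodes=1000):
    """Run episodes with random actions and record every observation seen.

    Assumes the classic Gym API: reset() returns an observation and
    step() returns (observation, reward, done, info).
    """
    observations = []
    for _ in range(episodes):
        observation = env.reset()
        observations.append(list(observation))
        done = False
        while not done:
            action = env.action_space.sample()
            observation, reward, done, info = env.step(action)
            observations.append(list(observation))
    return observations


def observed_ranges(observations):
    """Return per-dimension (lows, highs) over all recorded observations."""
    columns = list(zip(*observations))
    lows = [min(column) for column in columns]
    highs = [max(column) for column in columns]
    return lows, highs


def bin_edges(low, high, bins=10):
    """Return the bins + 1 evenly spaced edges between low and high."""
    width = (high - low) / bins
    return [low + i * width for i in range(bins + 1)]


# Made-up observations standing in for a real sample run.
sample = [[0.0, 1.0], [-2.0, 3.0], [1.0, -1.0]]
lows, highs = observed_ranges(sample)
print(lows, highs)
print(bin_edges(lows[0], highs[0]))
```

One consequence of this design is that the bin edges depend entirely on what random play happens to visit, so regions of the state space that random actions never reach get no bins of their own.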

As shown in the plot above, the reward for each episode is always 8, 9, 10, or 11. We never got above or below this range. Also, out of 10000 possible states, only about 50 were ever traversed.

So this first naive attempt didn’t work, but it was a fun experiment. Now the more challenging part: figuring out where it went wrong, and how to fix it.

Code written in this exercise is available here.