With my Luggable PC Mark I up and running, there was one more functional desktop-class CPU in my household that had not yet been drafted into my Folding@Home efforts: the one recently put in charge of running FreeNAS. As a network attached storage appliance, FreeNAS is focused on its main job of maintaining files and serving them on demand. There are FreeNAS plug-ins that add certain features, such as a home Plex server, but there’s no provision for running arbitrary programs on the FreeBSD-based, task-specific appliance.

What FreeNAS does have is the ability to host separate virtual environments that run independently of core FreeNAS functionality. This extensibility is part of why I upgraded my FreeNAS box to more capable hardware. The lighter-weight mechanism is a “jail”, similar in concept to a Linux container (the technology Docker was built on) but for applications that can run under the FreeBSD operating system. However, Folding@Home has no native FreeBSD client, so I can’t run it in a jail and have to fall back to plan B: a full virtual machine under bhyve. This incurs more overhead, because a virtual machine needs its own operating system instead of sharing the underlying FreeBSD infrastructure. That consumes hard disk storage and locks away a portion of RAM that FreeNAS can no longer use.

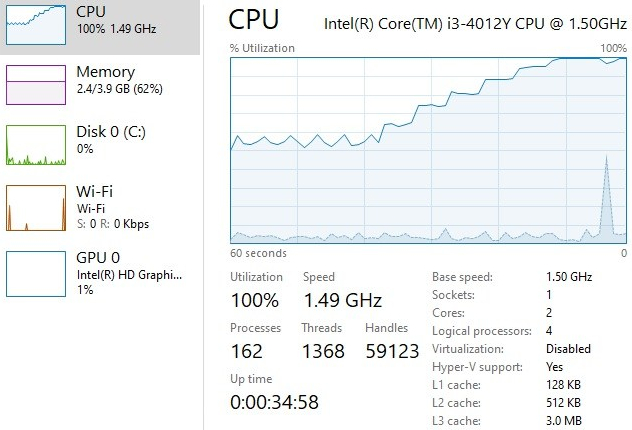

But the overhead wasn’t too bad in this particular application. I installed the lightweight Ubuntu 18 server edition in my VM, and Folding@Home protein folding simulation is not a memory-intensive task. The VM consumed less than 10GB of hard drive space and only 512MB of memory. To always reserve some processing power for FreeNAS, I allocated only 2 virtual CPUs to the folding VM. The Intel Core i3-4150 processor presents four logical CPUs, which are actually two physical cores with hyperthreading. Giving the folding VM 2 virtual CPUs should allow it to run at full speed on the two physical cores and still leave some margin to keep FreeNAS responsive.

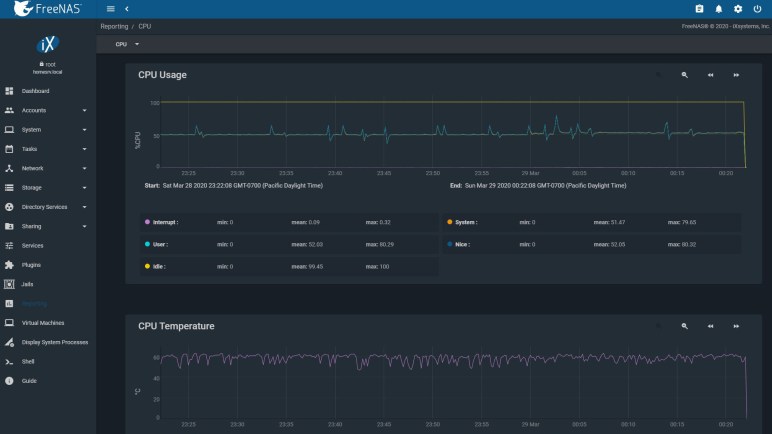

Once the VM was up and running, the FreeNAS CPU usage report showed occasional workloads pushing it above 50% load (2 out of 4 logical CPUs). CPU temperature also jumped well above ambient, to 60 degrees C. Since this Core i3 is far less powerful than the Core i5 in Luggable PC Mark I and II, it doesn’t generate as much heat to dissipate. I can hear the fan speed up to hold the temperature at 60 degrees, but the difference is minor relative to the other two.
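
For anyone sizing a similar VM, the 50% figure is just the ratio of virtual CPUs to logical CPUs on the host. Here is a minimal Python sketch of that arithmetic. The function name `vm_cpu_share` is my own invention for illustration, and the assumption is that each busy virtual CPU occupies at most one logical CPU on the host.

```python
def vm_cpu_share(vcpus: int, logical_cpus: int) -> float:
    """Upper bound on the fraction of host CPU a VM can occupy.

    Assumes each fully busy virtual CPU keeps at most one host
    logical CPU busy, so the VM can never exceed the whole host.
    """
    if vcpus < 0 or logical_cpus <= 0:
        raise ValueError("vcpus must be >= 0 and logical_cpus must be > 0")
    return min(vcpus, logical_cpus) / logical_cpus


# Numbers from this setup: 2 virtual CPUs on a Core i3-4150,
# which exposes 4 logical CPUs (2 cores with hyperthreading).
share = vm_cpu_share(vcpus=2, logical_cpus=4)
print(f"Folding VM can occupy up to {share:.0%} of the host CPU")
```

Under that assumption, any reading above 50% in the usage report would be FreeNAS doing its own work on top of a fully busy folding VM.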