My Dell Inspiron 7577 is not happy running Proxmox VE. For reasons I don’t yet understand, its onboard Ethernet would quit at unpredictable times. [UPDATE: Network connectivity stabilized after installing the Proxmox VE kernel update from 6.2.16-15-pve to 6.2.16-18-pve. The hack described in this post is no longer necessary.] After running dmesg to see the error messages logged on the system, I searched online and found a few Linux kernel flags to try as potential workarounds. None of them has kept the system online. So now I’m falling back to an ugly hack: rebooting the system after it falls offline.

My first session stayed online for 36 hours, so my first attempt at this workaround was to reboot the system once a day in the middle of the night. That wasn’t good enough because the network frequently failed much sooner than 24 hours after a reboot. The worst case I’ve observed so far was about 90 minutes. Unless I want to reboot every half hour or something equally ridiculous, I need to react to system state and not a timer.

In the Proxmox forum thread I read, one of the members said they wrote a script to ping Google at regular intervals and reboot the system if the ping fails. I started thinking about doing the same for myself but wanted to narrow down the variables. I don’t want my machine to reboot if there’s been a network hiccup at a Google datacenter, or at my ISP, or even when I’m rebooting my router. This is a local issue and I want to keep my scope local.

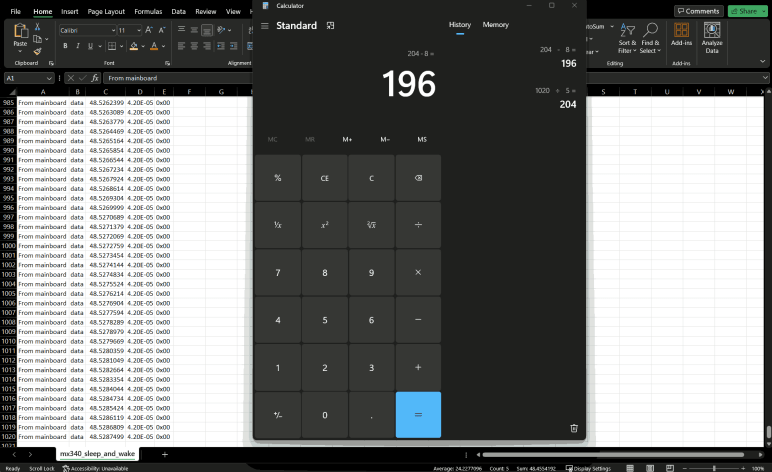

So instead of running ping, I decided to base my decision on what I’ve found so far. I don’t know why the Ethernet networking stack fails, but when it does, I know a network watchdog timer fires and logs a message to the system log. Reading about this logging system, I learned it is called a journal and can be accessed and queried using the command line tool journalctl. After reading about its options, I wrote a small shell script I named /root/watch_watchdog.sh:

#!/usr/bin/bash
if /usr/bin/journalctl --boot --grep="NETDEV WATCHDOG"
then
    /usr/sbin/reboot
fi

Every executable (bash, journalctl, and reboot) is specified with its full path because I had problems with the execution context of bash scripts run as cron jobs. My workaround, which I decided was also good security practice, is to fully qualify each binary file.
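A likely cause of that cron context problem is the search path: cron typically runs jobs with a much shorter PATH than an interactive root shell (on Debian-based systems it is usually just /usr/bin:/bin), so a bare "reboot" living in /usr/sbin would not be found. As an alternative to full paths, a script can set PATH itself. The sketch below is only an illustration of that idea; the directory list is an assumption about a typical Debian-style layout, and the helper name resolve is made up for this example.

```shell
# Assumed directory list for a Debian-style system; adjust to match the machine.
PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin
export PATH

# Hypothetical helper: print where a bare command name resolves under
# the PATH above, or say that it was not found.
resolve() {
    command -v "$1" || echo "$1: not found"
}

resolve journalctl
resolve reboot
```

Running this from a cron job and from an interactive shell should then give the same answers, which is the point of pinning PATH. Full paths, as used in the script above, remain the more explicit choice.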

The --boot parameter restricts the query to the current running system boot, ignoring messages from before the most recent reboot.

The --grep="NETDEV WATCHDOG" parameter looks for the network watchdog error message. I thought to restrict it to exactly the message I saw: "kernel: NETDEV WATCHDOG: enp59s0 (r8169): transmit queue 0 timed out" but using that whole string returned no entries. Maybe the symbols (the colon? the parentheses?) caused a problem. Backing off, I found just "NETDEV" is too broad because there are other networking messages in the log. Just "WATCHDOG" is also too broad given unrelated watchdogs on the system. Using "NETDEV WATCHDOG" is fine so far, but I may need to make it more specific later if that’s still too broad.
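One possible explanation for the full string returning nothing: according to the journalctl documentation, --grep takes a Perl-compatible regular expression and matches it against the MESSAGE field only. In a regular expression, unescaped parentheses form a group instead of matching literal "(" and ")" characters, and the "kernel: " prefix shown in the output is the source of the message, not part of the MESSAGE text. The sketch below uses grep -E as a stand-in for journalctl (both treat unescaped parentheses as grouping) on a sample line copied from the message above; the helper name match is made up for this example.

```shell
# Sample text in the shape of the MESSAGE field (no "kernel: " prefix).
msg='NETDEV WATCHDOG: enp59s0 (r8169): transmit queue 0 timed out'

# Same text used as a pattern, first as-is and then with parentheses escaped.
unescaped='NETDEV WATCHDOG: enp59s0 (r8169): transmit queue 0 timed out'
escaped='NETDEV WATCHDOG: enp59s0 \(r8169\): transmit queue 0 timed out'

# Hypothetical helper: report whether a regex pattern matches a line.
match() {
    if printf '%s\n' "$1" | grep -q -E -- "$2"; then
        echo "match"
    else
        echo "no match"
    fi
}

match "$msg" "$unescaped"   # prints "no match": (r8169) is read as a group
match "$msg" "$escaped"     # prints "match": \( and \) are literal parentheses
```

If this reasoning holds for journalctl as well, a more specific query could look like journalctl --boot --grep='NETDEV WATCHDOG: .* \(r8169\)', though I have not verified that against this machine's journal.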

The most important part of this is the exit code from journalctl. It is zero if the query finds matching messages, and nonzero if no entries are found. The "if" statement runs its "then" branch when the exit code is zero, so the system reboots only when the watchdog message is present in the journal.
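To see how an exit code drives the decision without touching a real journal, here is a small sketch that uses grep as a stand-in for journalctl --grep. In shell, "if" takes its "then" branch when the command exits with status zero, and grep exits zero when it finds a match. The function name check_log and the second sample line are invented for this illustration, and the function prints its decision instead of actually rebooting.

```shell
# Hypothetical stand-in for the watchdog check: $1 is log text, $2 is the pattern.
# grep -q prints nothing and exits 0 on a match, 1 when nothing matches.
check_log() {
    if printf '%s\n' "$1" | grep -q -- "$2"
    then
        echo "reboot"      # exit status 0: the pattern was found
    else
        echo "stay up"     # nonzero exit status: nothing matched
    fi
}

check_log "NETDEV WATCHDOG: enp59s0 (r8169): transmit queue 0 timed out" "NETDEV WATCHDOG"
check_log "an unrelated log line" "NETDEV WATCHDOG"
```

The first call prints "reboot" and the second prints "stay up". To confirm what journalctl itself returns on a given machine, run the query by hand and then echo $? immediately afterward.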

Once the shell script file was in place and made executable with chmod +x /root/watch_watchdog.sh, I could add it to the cron jobs table by running crontab -e. I started by running this script once an hour, at the top of the hour.

0 * * * * /root/watch_watchdog.sh

But then I thought: what’s the downside to running it more frequently? I couldn’t think of anything, so I expanded to running once every five minutes. (I learned the pattern syntax from Crontab guru.) If I learn a reason not to run this so often, I will reduce the frequency.

*/5 * * * * /root/watch_watchdog.sh

This ensures network outages due to this Realtek Ethernet issue last little more than five minutes. This is a vast improvement over what I had until now, which was waiting until I noticed the 7577 had dropped off the network (which may take hours), pulling it off the shelf, logging in locally, and typing “reboot”. Now this script will do it within five minutes of the watchdog timer message. It’s a really ugly hack, but it’s something I can do today. Fixing this issue properly requires a lot more knowledge about Realtek network drivers, and that knowledge seems to be spread across multiple drivers.

Featured image created by Microsoft Bing Image Creator powered by DALL-E 3 with prompt “Cartoon drawing of a black laptop computer showing a crying face on screen and holding a network cable”