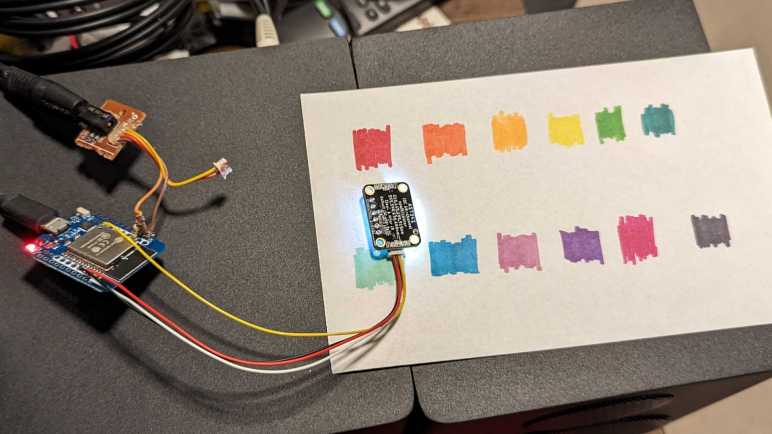

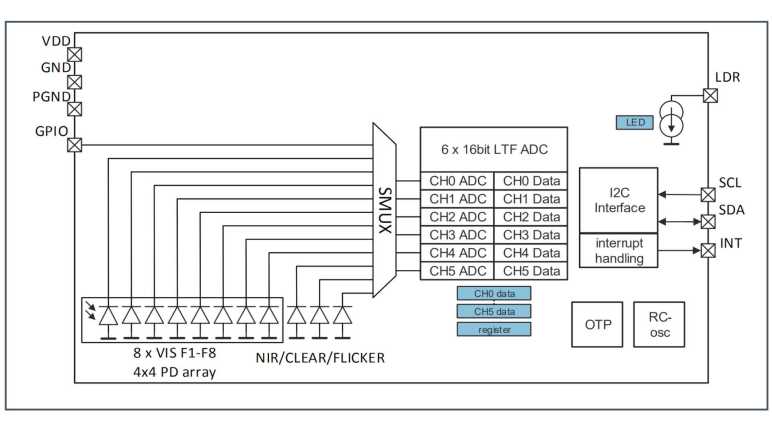

After a helpful comment pointed me to documentation on the no-longer-mysterious AS7341 SMUX (sensor multiplexer), I went to learn more about another mystery I stumbled across as a beginner web developer: CORS (cross-origin resource sharing). Why does CORS policy exist? After a bit of poking around, I believe the answer is to mitigate a type of attack under the umbrella of CSRF (cross-site request forgery).

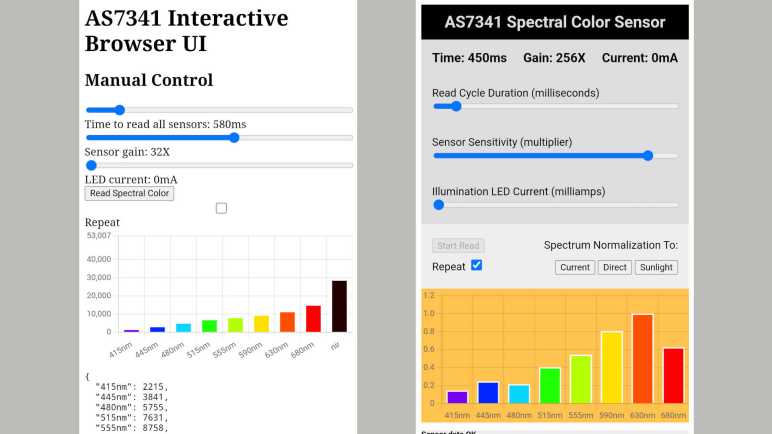

When developing my AS7341 web app, I had the AS7341 accessible via an HTTP GET on my ESP32 and thought I could develop the HTML interface on my desktop machine. But when my desktop-served JavaScript tried to query my ESP32, I was blocked by browser CORS policy. By default, JavaScript served from one server (my desktop) is not allowed to read resources on another (my ESP32).
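To make "one server" versus "another" concrete: browsers compare origins, meaning the combination of scheme, host, and port. Here is a minimal Python sketch of that comparison. The function names and both addresses are hypothetical stand-ins I made up for illustration, not values from my project.

```python
from urllib.parse import urlsplit

# Ports implied when a URL does not spell one out.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_of(url):
    """Return the (scheme, host, port) triple that identifies an origin."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    port = parts.port if parts.port is not None else DEFAULT_PORTS.get(scheme, -1)
    return (scheme, host, port)


def same_origin(url_a, url_b):
    """True when both URLs share scheme, host, and port."""
    return origin_of(url_a) == origin_of(url_b)


# Hypothetical addresses: a page served by my desktop, and the ESP32 endpoint.
desktop_page = "http://localhost:8080/index.html"
esp32_endpoint = "http://192.168.1.50/as7341"

print(same_origin(desktop_page, esp32_endpoint))                      # False
print(same_origin("http://192.168.1.50/index.html", esp32_endpoint))  # True
```

The first case is my development setup, where the page and the endpoint live on different origins. The second is the "production" setup, where the ESP32 serves both.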

Reading various resources online, I learned I could set my ESP32’s HTTP response header “Access-Control-Allow-Origin” to a wildcard “*” to opt out of CORS protection. But that’s merely a “make the error go away” kind of recommendation. I knew CORS is security related, but I didn’t understand the motivation. What security problem does CORS prevent? Without knowing the motivation, I didn’t know what I was opening up by setting “Access-Control-Allow-Origin: *”. In my web app, I started out cautiously by setting that header only while developing the HTML UI, when my desktop serves the page that queries my ESP32. In “production”, my ESP32 serves the HTML itself and does not need “Access-Control-Allow-Origin: *” to query itself, so that header is absent.
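The development-only header logic can be sketched in a few lines. This is not my ESP32 sketch; it is a Python stand-in using the standard library's `http.server`, and the `dev_mode` flag, handler name, and placeholder JSON body are all assumptions made up for illustration.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


def make_handler(dev_mode):
    """Build a handler that adds the CORS header only in development."""

    class SensorHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            # Placeholder payload standing in for a real sensor reading.
            body = json.dumps({"reading": 123}).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            if dev_mode:
                # Lets pages from any origin read this response.
                self.send_header("Access-Control-Allow-Origin", "*")
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, format, *args):
            pass  # keep the demo output quiet

    return SensorHandler


def fetch_cors_header(dev_mode):
    """Start a throwaway local server, query it, return the CORS header."""
    server = HTTPServer(("127.0.0.1", 0), make_handler(dev_mode))
    port = server.server_address[1]
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", port, timeout=5)
        conn.request("GET", "/")
        response = conn.getresponse()
        response.read()
        value = response.getheader("Access-Control-Allow-Origin")
        conn.close()
        return value
    finally:
        server.shutdown()
        server.server_close()


print(fetch_cors_header(dev_mode=True))   # *
print(fetch_cors_header(dev_mode=False))  # None
```

Note that the Python client reads the response either way. The header only changes what a browser lets page JavaScript see.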

Is that the right thing to do, or is that being overly cautious? I set out to learn more. Curiously, MDN and other resources gave me plenty of information about HOW CORS works, but not a lot about WHY CORS exists. I guess CORS documentation assumes the reader already knows! Based on that, I figured I was looking for a relatively common website security issue that is now considered basic knowledge by network professionals.

Another data point is the fact that CORS is only applicable to HTTP queries from JavaScript running in the browser. From a command line on my desktop, I can use the “curl” tool to query my ESP32 and CORS does nothing to block that. My browser on my desktop can query the endpoint directly and that is not blocked by CORS policy, either.

Things didn’t make much sense until I found a key piece of information: an HTTP request sent from a browser’s JavaScript runtime carries more than the URL and its parameters, because the browser can also attach cookies set by that host. These cookies may contain user authentication (the “Keep me logged in” checkbox), and it makes sense that such a capability shouldn’t be available to just any piece of JavaScript served by random hosts. Knowing this, and knowing the kind of abuse such code can cause, eventually led me to a category of security attacks known as CSRF (cross-site request forgery).
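As far as I understand the rules, the browser's decision for a cross-origin response boils down to a small check, and the wildcard is not accepted for requests that carry cookies. Below is a simplified Python model of that check. It is my own sketch, it ignores preflight requests entirely, and the function name, parameter names, and example origins are hypothetical.

```python
def browser_allows_read(page_origin, target_origin, allow_origin,
                        allow_credentials=None, with_credentials=False):
    """Simplified model: may page JavaScript read this response?

    allow_origin is the Access-Control-Allow-Origin response header value
    (None when absent); allow_credentials is the value of
    Access-Control-Allow-Credentials (None when absent).
    """
    if page_origin == target_origin:
        return True  # same origin, CORS does not apply
    if allow_origin is None:
        return False  # server never opted in
    if with_credentials:
        # Cookie-carrying requests need an exact origin match plus an
        # explicit opt-in; the "*" wildcard is rejected here.
        return allow_origin == page_origin and allow_credentials == "true"
    return allow_origin == "*" or allow_origin == page_origin


desktop = "http://localhost:8080"  # hypothetical development page origin
esp32 = "http://192.168.1.50"      # hypothetical ESP32 origin

print(browser_allows_read(desktop, esp32, None))                         # False
print(browser_allows_read(desktop, esp32, "*"))                          # True
print(browser_allows_read(desktop, esp32, "*", with_credentials=True))   # False
```

Tools like curl never run a check like this, which matches what I saw from the command line.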

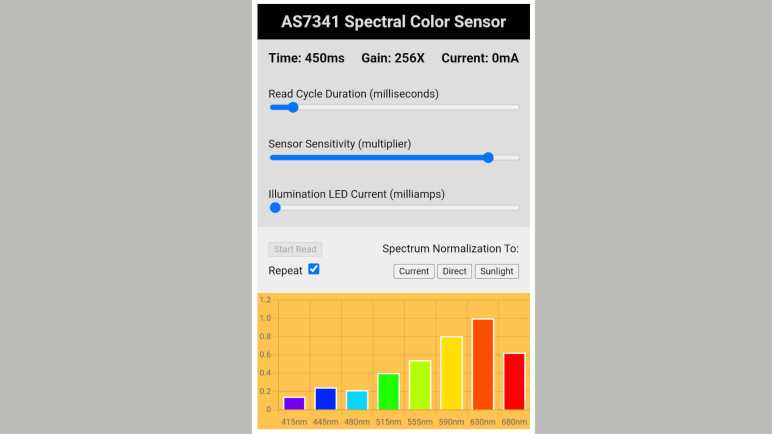

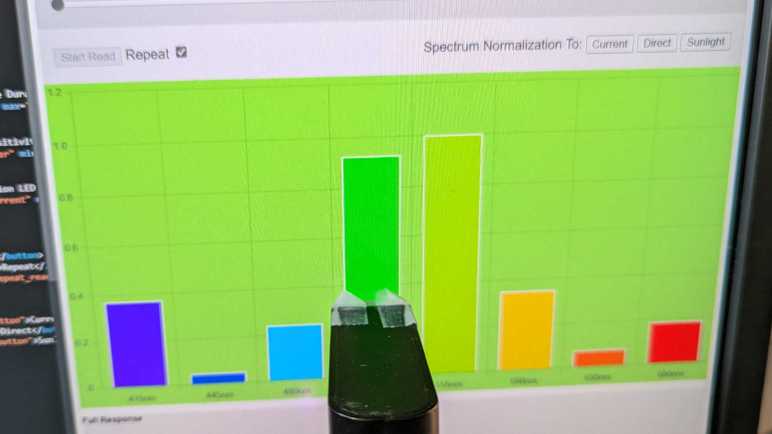

Once I understood CORS is here to mitigate a subset of CSRF attacks, I could look at my ESP32 AS7341 access endpoint and decide CSRF is not a problem here. Setting “Access-Control-Allow-Origin: *” does not open me up to security nastiness, so my ESP32 sketch now sets that header all the time, not just during development. This is a handy bit of knowledge, but it merely scratches the surface of web security. Another item I found to be big and intimidating is OAuth.

Code for this project is publicly available on GitHub.