For years I’ve been trying to figure out how to do machine vision affordably so I could build autonomous robots. I looked at hacking cheap LIDAR from a Neato robot vacuum. I looked at an old Kinect sensor bar. I looked at Google AIY Vision. I looked at JeVois. I tried to get a grounding in OpenCV. And I was in the middle of getting up to speed on Google ARCore when the OpenCV AI Kit (OAK) Kickstarter launched.

Like most Kickstarters, the product description is written to make it sound like a fantastic dream come true. The difference between this and every other Kickstarter is that it is describing my dream of an affordable robot vision sensor coming true.

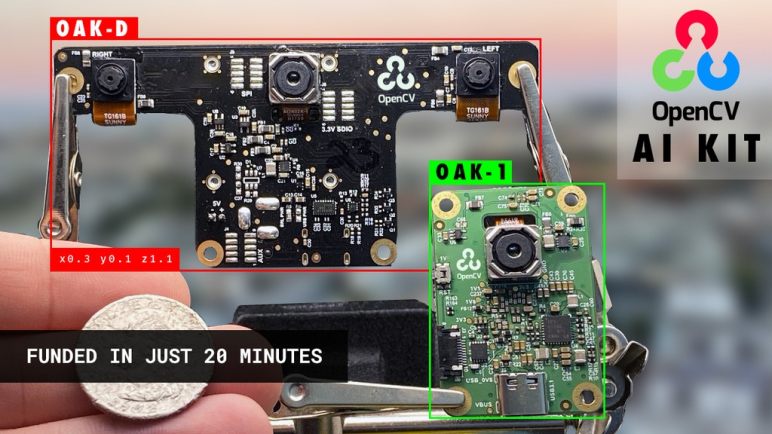

The Kickstarter is launching two related products. The first is OAK-1, a single camera backed by hardware acceleration for computer vision algorithms. This sounds like a supercharged competitor to machine vision cameras like the JeVois and OpenMV. However, it is less relevant to a mobile autonomous robot than its stablemate, the OAK-D.

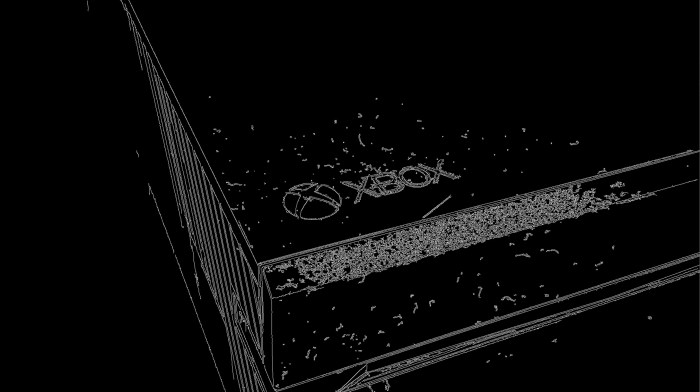

Armed with two cameras for stereoscopic vision plus a third for full-color, high-resolution image capture, the OAK-D promises a tremendous amount of capability for a relatively affordable $149 (at least for the current batch of backers). That capability ranges from relatively straightforward stereo distance calculations to more sophisticated inferences, like image segmentation, aided by that distance information.
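For a sense of what the straightforward end of that range looks like, here is a minimal sketch of the textbook stereo relation: for two parallel, rectified cameras, depth equals focal length times baseline divided by disparity. The function name, focal length, and baseline below are illustrative assumptions of mine, not OAK-D specifications, and this is not how the OAK-D firmware necessarily does it.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Estimate distance (meters) to a point seen by two parallel, rectified cameras.

    focal_length_px: camera focal length expressed in pixels
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal pixel shift of the point between left and right images
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity.
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers chosen only for illustration, not OAK-D specs.
FOCAL_LENGTH_PX = 800.0
BASELINE_M = 0.075

for disparity in (10.0, 20.0, 40.0):
    depth = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, disparity)
    print(f"disparity {disparity:5.1f} px -> depth {depth:.2f} m")
```

The arithmetic is trivial once disparity is known. The hard part, and the part hardware acceleration would help with, is finding matching pixels between the two images in the first place.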

Relative to the $99 Google AIY Vision, the OAK-D has far more promise for helping a robot understand the structure of its environment. I hope it ships and delivers on all its promises, because then an OAK-D would become the camera of choice for autonomous robot projects, hands down. But even if it falls short, it is still a way to capture stereo footage for calculation elsewhere, and only moderately overpriced for a three-camera peripheral. Or at least, that’s how I justified backing an OAK-D for my own experiments. The project has easily surpassed its funding goals, so now I have to wait and see if the team can deliver the product by December 2020 as promised.