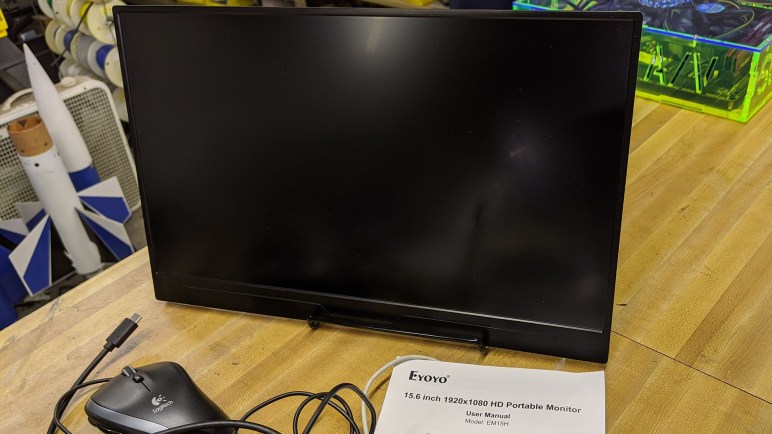

Once upon a time I decided it was a good idea to turn an old laptop screen into a portable external monitor. That was a fun project and I learned a lot, but technology has advanced and now there isn’t much point in doing the same thing again. The final nail in the coffin was the opportunity to play with an Eyoyo EM15H Portable USB-C Monitor. (*) It is just one example of a now-prolific product category that barely existed when I started my project.

The key enabling technology is the growing maturity of USB-C. Yes, it’s still something of a mess, but engineers have kept chasing the dream of a universal connector. For portable monitors, the most useful feature is the ability to carry data and power on a single cable. That makes a portable monitor much easier to set up and use than my project, where I had to wrangle separate power and data cables.

Another technological evolution is how thin screens have become, driven primarily by the quest for ever thinner laptop computers. This particular monitor, complete inside its plastic enclosure, is thinner than the bare display panel I used for my project. I know the move from CCFL to LED backlighting has something to do with it, but I’m sure that’s only part of the story. The modern product is a fraction of the size and weight of my project.

The final piece of the puzzle is a standardized way to communicate data to the monitor. Early USB external monitors worked by presenting themselves to the system as unique video devices. This required their own specific drivers, and all video processing had to be done by the USB monitor itself. The cheap low-powered models were only useful for mostly static content such as PowerPoint presentations. They could not handle full-screen video and provided no 3D acceleration for games.
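A bit of back-of-the-envelope arithmetic shows why those early monitors struggled with full-screen video. The sketch below assumes a 1920x1080 screen at 60 frames per second with 24 bits per pixel, and assumes the monitor is connected over USB 2.0 with its nominal 480 Mbit/s signaling rate. These numbers are my own illustrative assumptions, not specifications of any particular product.

```python
def raw_video_bitrate(width: int, height: int, fps: int, bits_per_pixel: int = 24) -> int:
    """Return the uncompressed video bit rate in bits per second."""
    return width * height * fps * bits_per_pixel


# Nominal USB 2.0 high-speed signaling rate. Real-world throughput is lower.
USB2_SIGNALING_BPS = 480_000_000

# Assumed display mode: 1920x1080 at 60 frames per second, 24-bit color.
needed = raw_video_bitrate(1920, 1080, 60)

print(f"Raw 1080p60 video: {needed / 1e9:.2f} Gbit/s")
print(f"USB 2.0 nominal:   {USB2_SIGNALING_BPS / 1e9:.2f} Gbit/s")
print(f"Ratio:             {needed / USB2_SIGNALING_BPS:.1f}x")
```

Under these assumptions, the raw pixel stream is several times larger than what the link can carry, so such a monitor would have to rely on compression or only send the parts of the screen that changed. That fits with those monitors being fine for slides but not for video or games.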

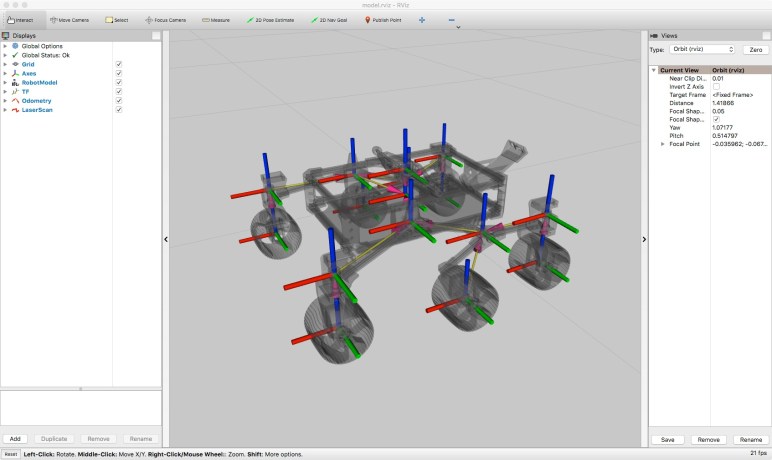

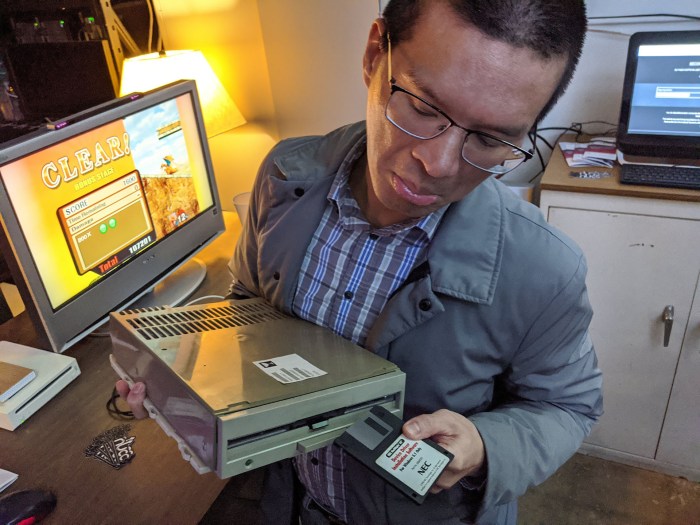

USB-C allows a better way. With support for alternate modes such as DisplayPort and Thunderbolt, a USB-C display can leverage all the graphics processing power of the computer and simply display the rendered results. However, since USB-C is backwards compatible with old USB, it’s hard to be sure how a particular monitor is implemented until we test it firsthand. I connected this monitor to the USB-C port of my Dell 7577, then loaded up a few video games with the graphics detail turned up high.

If the monitor were a dumb frame buffer video device, graphics performance would plummet, or the games might not display at all. There’s no way a cheap external monitor can match the graphics performance of the NVIDIA GTX 1060 GPU inside the laptop.

But we had full graphics performance: full detail running at 60 frames per second. This is convincing proof the monitor is showing images rendered by the GPU inside the laptop. A lightweight, portable, single-cable easy-to-set-up external monitor with full performance is now a reality for about $150. (*) At that price point, I’m unlikely to build another external monitor of my own.

(*) Disclosure: As an Amazon Associate I earn from qualifying purchases.