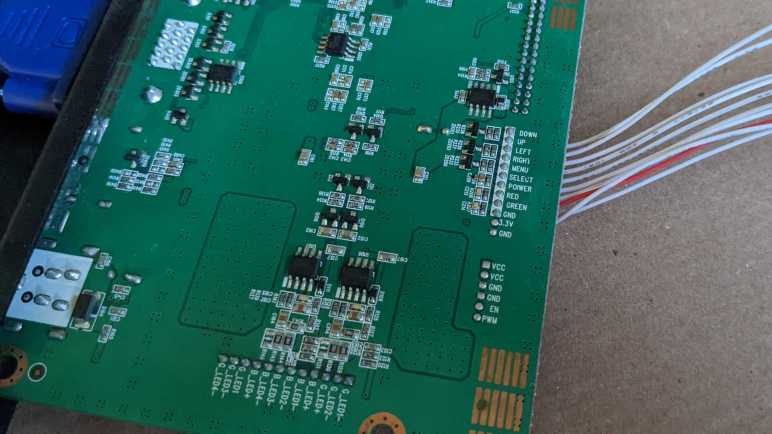

To help me understand the internal workings of a Canon Pixma MX340 multi-function inkjet, I would like some signal analysis tools, specifically for recording data coming from a quadrature encoder attached to the paper feed motor gear train. After I found out Saleae Logic could not do this, I started reading about sigrok. Thanks to a documented protocol, sigrok can work with data acquisition hardware that is not officially supported, such as an ATmega328 Arduino or an ESP32.

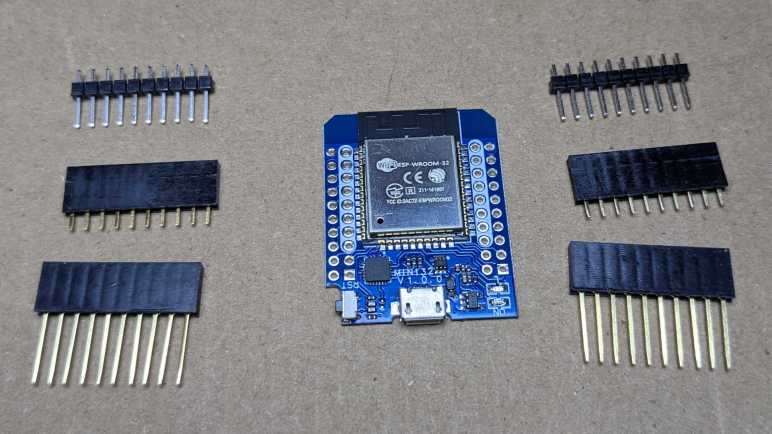

It started to look like too much of a distraction, though, so I refocused on the problem at hand: I just need quadrature decoding. For that specific purpose, the ESP32 has a dedicated hardware peripheral. Pulse Counter (PCNT) can certainly do what its name says and count pulses on a single input, but it is more general than that. Espressif's designers gave PCNT the ability to respond to multiple inputs, with configurable interaction between them. One specific configuration, demonstrated in an official example, turns PCNT into a quadrature decoder.

For my purpose I need something that can keep up with this encoder's quadrature phase changes, roughly 10-20 kHz. I found a forum thread, "ESP32 pulse counter speed (max frequency)", which says PCNT can keep up with signals up to 40 MHz. That’s plenty fast for my needs!
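A quick sanity check of that headroom, treating the upper 20 kHz figure as phase changes per second (my reading of the estimate) and the forum's 40 MHz as the fastest input PCNT can follow:

$$
t_{\text{edge}} = \frac{1}{20\,\text{kHz}} = 50\,\mu\text{s},\qquad
T_{\text{PCNT}} = \frac{1}{40\,\text{MHz}} = 25\,\text{ns},\qquad
\frac{t_{\text{edge}}}{T_{\text{PCNT}}} = \frac{50\,\mu\text{s}}{25\,\text{ns}} = 2000
$$

Even at the fast end, phase changes would arrive about 2000 times more slowly than the claimed limit.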

In fact, that might be too fast. With that much sensitivity, small disturbances (like the little dip visible in one phase when the other phase is pulled to ground) may register with PCNT as edges, and that would spell trouble. Fortunately Espressif engineers thought of that too: PCNT includes an optional glitch filter that ignores pulses narrower than a configured width. This may be a useful tool in my toolbox if I see spurious data.

ESP32 PCNT looks like a promising foundation for the tool I want. I would have to install ESP-IDF (probably via its VS Code extension) before I could compile the official sample and start modifying it for my needs. It seems pretty easy on paper, but then I realized I had an even easier option I should try first.
