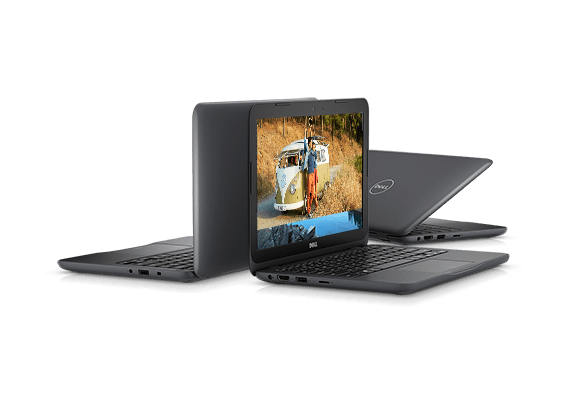

Well, I should have seen this coming. Right after I wrote that I wanted to be disciplined about buying hardware, that I wanted to wait until I knew what I actually needed, a temptation arose that called for a change in plans. Now I have a Dell Inspiron 11 3000 (3180) on its way even though I don’t yet know if it’ll be a good ROS brain for Sawppy the Rover.

The temptation was Dell’s Labor Day sale. This machine in its bare-bones configuration has an MSRP of $200 and can frequently be found on sale for $170-$180. To kick off their sale event, Dell made a number of them available for $130, and that was too much to resist.

This particular hardware chassis is also sold as a Dell Chromebook, so the hardware specs are roughly in line with the Chromebook comments in my previous post. We’ll start with the least exciting item: the heart is a low-end dual-core x86 CPU, an AMD E2-9000e that’s basically the bottom of the totem pole for Intel-compatible processors. But it is a relatively modern 64-bit chip enabling options (like WSL) not available on the 32-bit-only CPUs inside my Acer Aspire or Latitude X1.

The CPU is connected to 4GB of RAM, far more than the 1GB of a Raspberry Pi and hopefully a comfortable amount for sensor data processing. Main storage is listed as 32GB of eMMC flash memory, which is better than the microSD card of a Pi, if only by a little. The more promising aspect of this chassis is that it is also sometimes sold with a cheap spinning platter hard drive, so the chassis can accommodate either type of storage, as confirmed by the service manual. If I’m lucky (again), I might be able to swap it out for a standard solid state drive and put Ubuntu on it.

It has most of the peripherals expected of a modern laptop: a screen, keyboard, trackpad, and a webcam that might be repurposed for machine vision. The accessory that’s the most interesting for Sawppy is a USB 3 port, necessary for a potential depth camera. As an 11″ laptop, it should easily fit within Sawppy’s equipment bay with its lid closed. The most annoying hardware tradeoff for its small size? This machine does not have a hard-wired Ethernet port, something even a Raspberry Pi has. I hope its on-board wireless networking is solid!

And lastly – while this computer has Chromebook-level hardware, this particular unit is sold with Windows 10 Home. Having the 64-bit edition installed from the factory should in theory allow Windows Subsystem for Linux. This way I have a backup option to run ROS even if I can’t replace the eMMC storage module with an SSD. (And if I’m not bold enough to outright destroy the Windows 10 installation on eMMC.)
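Once the machine arrives, a quick way to confirm the 64-bit part of that plan would be something like the sketch below. It is only a rough check under my own assumptions: the helper name `looks_64bit` and the set of machine strings are illustrative, not an official list, and a 64-bit CPU alone does not guarantee WSL is available.

```python
import platform

# Machine strings commonly reported for 64-bit CPUs.
# Assumption: illustrative and non-exhaustive, not an official list.
SIXTY_FOUR_BIT_MACHINES = {"amd64", "x86_64", "arm64", "aarch64"}


def looks_64bit(machine: str) -> bool:
    """Return True if the reported machine string names a 64-bit architecture."""
    return machine.strip().lower() in SIXTY_FOUR_BIT_MACHINES


if __name__ == "__main__":
    machine = platform.machine()  # e.g. "AMD64" on 64-bit Windows
    if looks_64bit(machine):
        print(f"{machine}: 64-bit, so WSL is at least plausible")
    else:
        print(f"{machine}: does not look 64-bit, WSL is unlikely to work")
```

Running it on the laptop itself prints one line; the same helper can also be fed a string copied from a spec sheet.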

Looking at the components in this package, this is a great deal: 4GB of DDR4 laptop memory is around $40 all on its own. A standalone license of Windows 10 Home has an MSRP of $100. That puts us past the $130 price tag even before considering the rest of the laptop. If worse comes to worst, I could transfer the RAM module out to my Inspiron 15 for a memory boost.
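For anyone who wants to check that arithmetic, here is a minimal Python sketch using only the rough figures quoted above. The names (`component_values`, `parts_value`, `surplus`) are just illustrative, and the prices are approximations rather than quotes from any store.

```python
# Rough value tally using the approximate figures quoted in this post (USD).
PURCHASE_PRICE = 130  # Labor Day sale price

component_values = {
    "4GB DDR4 laptop memory": 40,
    "Windows 10 Home license": 100,
}


def parts_value(values):
    """Sum the standalone prices of the listed components."""
    return sum(values.values())


def surplus(values, price):
    """Parts total minus the price paid; positive means the parts alone cover it."""
    return parts_value(values) - price


if __name__ == "__main__":
    total = parts_value(component_values)
    extra = surplus(component_values, PURCHASE_PRICE)
    print(f"Parts total: ${total}")
    print(f"Difference versus ${PURCHASE_PRICE} price: ${extra}")
```

The memory and the Windows license alone add up to $140, about $10 more than the sale price, before counting the screen, battery, or anything else in the chassis.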

But it shouldn’t come to that. I’m confident that even if this machine proves to be insufficient as Sawppy’s ROS brain, the journey to that enlightenment will be instructive enough to be worth the cost.

We already have a starting point on the low end of the spectrum: Raspberry Pi. There are several other competitors in the single-board computer market, almost all of which claim to be more powerful than a Pi. But very few could match the mass volume pricing of a Pi or its software ecosystem. The last part is important because ROS runs best on something that has a port of Ubuntu, which is absent from many Pi competitors.

This points to a modern Chromebook with x86 CPU, most of which

If a Chromebook proves insufficient, it’ll likely be due to the low-end CPU. Where we go beyond that will depend on the nature of the work overloading the chip. If it’s in the arena of dedicated vision or other AI-related processing capabilities, we might move to something like

For less task-specific needs, the

The previous blog post outlined some points of concern against using

As investigation into ROS continues, it’s raising concern that a self-contained autonomous robot will likely need a brain more powerful than a Raspberry Pi. It’s a very capable little computing platform and worked well serving as Sawppy’s brain when operated as a remote-control vehicle. But when the rover needs to start thinking on its own, would a little single-board computer

The people at Robotis who created

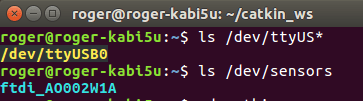

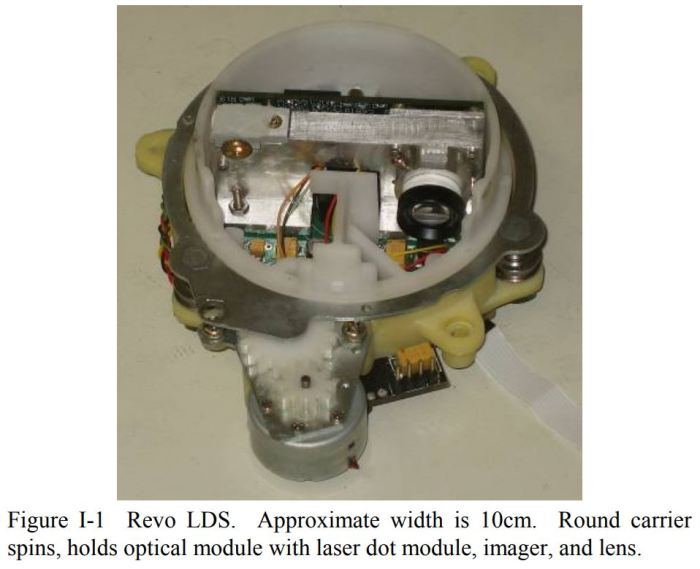

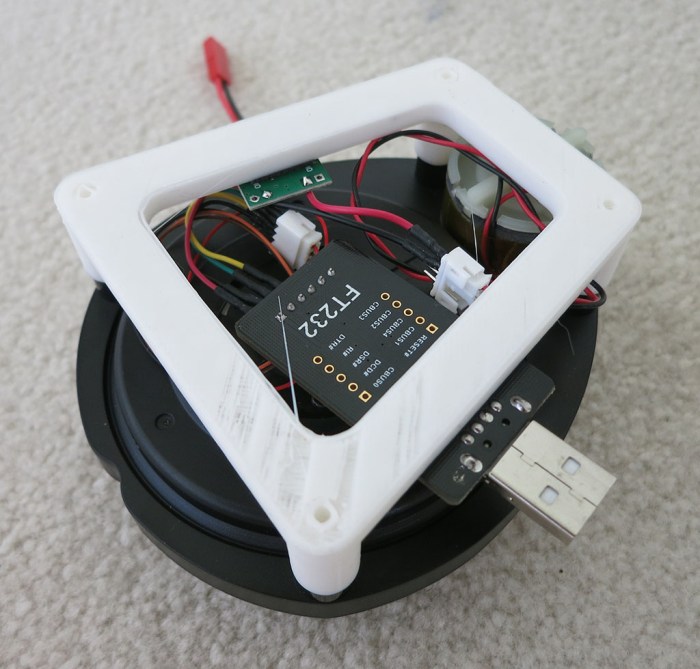

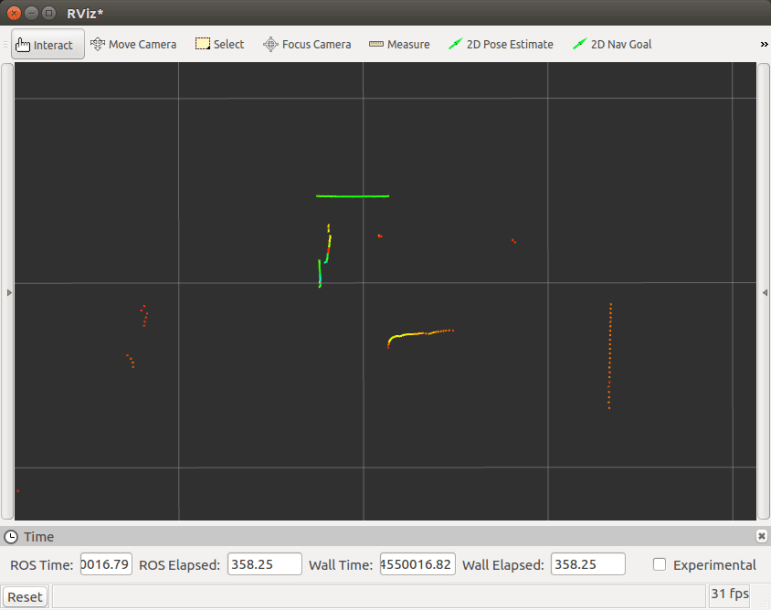

I bought a laser scanner salvaged from a Neato robot vacuum

The low-end option is the “

The higher-end option is the “

While we’re on the topic of things I wanted to investigate in the future… there was a recent announcement declaring availability of

Being on the leading edge carries its own kind of thrill. When I started looking over the TurtleBot 3 manual I noticed the index listed a “