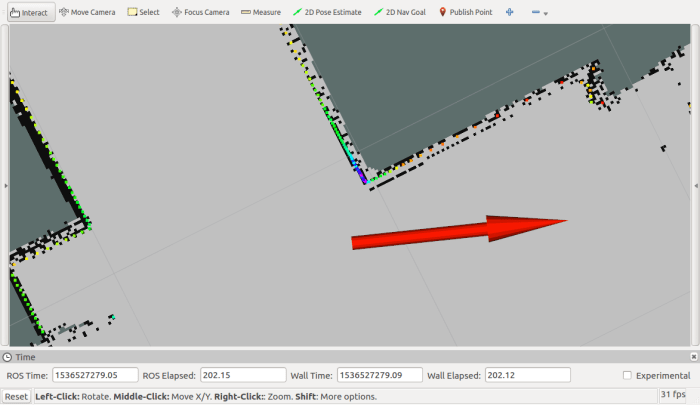

A little real-world experience with Phoebe has revealed several problems with my first rough draft chassis design. The second problem on the list is Phoebe’s LIDAR height: It sits too high to detect certain obstacles like an office chair. In the picture below, Phoebe has entered a perilous situation without realizing it. The LIDAR’s height meant Phoebe only sees the chair’s center post, thinking it is a safe distance away, blissfully ignorant of chair legs that have completely blocked its path.

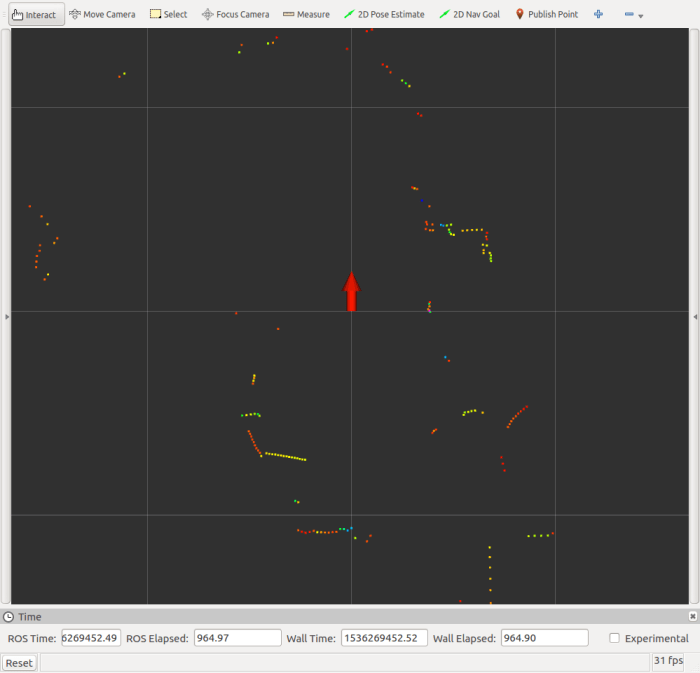

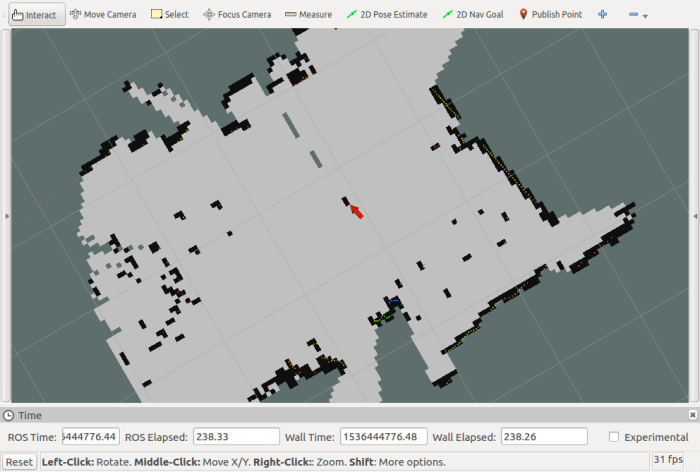

Here is the RViz plot of the situation, representing Phoebe’s perspective. A red arrow indicates Phoebe’s position and direction. Light gray represents cells in the map occupancy grid thought to be open space, and black are cells that are occupied by an obstacle. Office chair’s center post is represented by two black squares at the tip of the red arrow, and the chair’s legs are absent because Phoebe never saw them with LIDAR.

This presents obvious problems in collision avoidance as Phoebe can’t avoid chair legs that it can’t see. Mounting height for Phoebe’s LIDAR has to be lowered in order to detect office chair legs.

Now that I’ve seen this problem firsthand, I realize it would also be an issue for TurtleBot 3 Burger. It has a compact footprint, and its parts are built upwards. This meant it couldn’t see office chair legs, either. But that’s OK as long as the robot is constrained to environments where walls are vertical and tall, like the maze seen in TurtleBot 3 Navigation demo. Phoebe would work well in such constrained environments too, but I’m not interested in constrained environments. I want Phoebe to roam my house.

Which leads us to Waffle Pi, the other TurtleBot 3 model. it has a larger footprint than the Burger, but it is a squat shape allowing LIDAR to be mounted lower and still have a clear view all around the top of the robot.

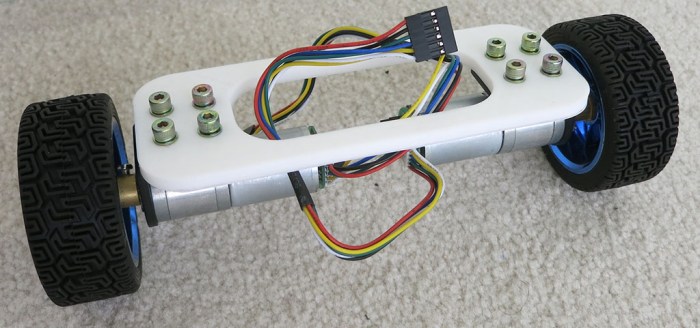

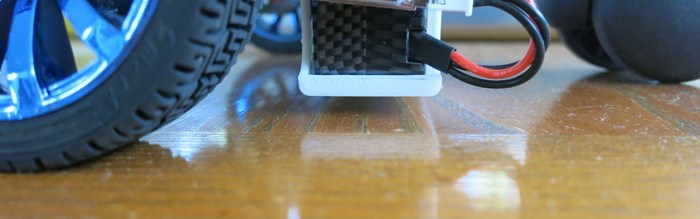

So I need to raise the bottom of Phoebe for ground clearance, and also lower the top for LIDAR mount. If the LIDAR can be low enough to look just over the top of the wheels, that should be good enough to see an office chair’s legs. Will I find a way to fit all of Phoebe’s components into this reduced height range? That’s the challenge at hand.

(Cross-posted to Hackaday.io)

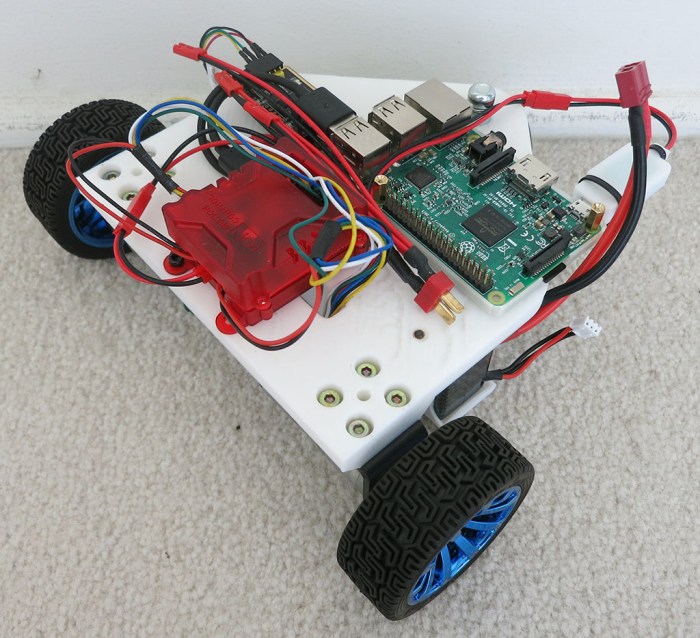

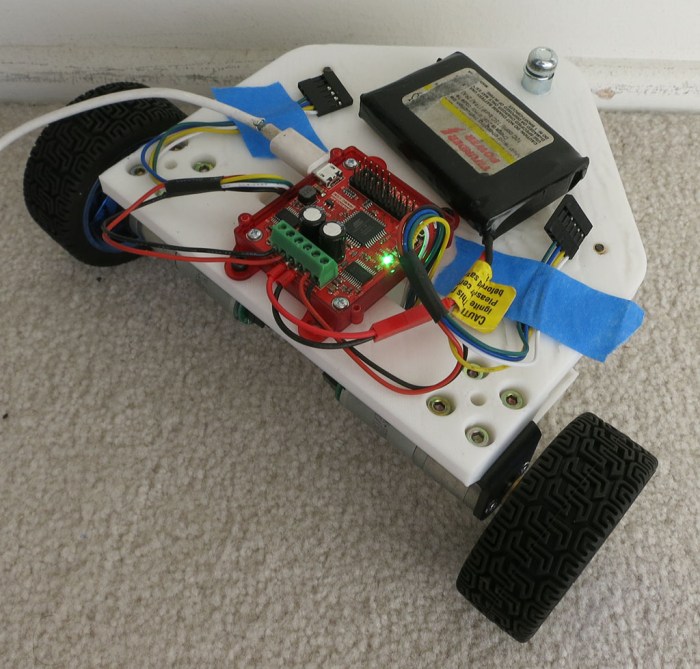

I’m making good progress on integrating ROS into my TurtleBot variant called Phoebe. I’ve just configured RViz to plot Phoebe’s location as reported by

I’m making good progress on integrating ROS into my TurtleBot variant called Phoebe. I’ve just configured RViz to plot Phoebe’s location as reported by