The great thing about ROS is that it is a popular and open platform for everyone to work with, resulting in a large ecosystem of modules that cover a majority of robot hardware. But being so open to everyone does have a few downsides, because not everyone has the same resources to develop and maintain their ROS modules. This means some modules fall out of date faster than others, or works with its author’s version of hardware but not another version.

Such turned out to be the case for the existing neato_robot ROS package, which was created years ago and while the author kept updated it through four ROS releases, that maintenance effort stopped and so this code is now five releases behind latest ROS. That in itself is not necessarily a problem – Open Source Robotics Foundation tries to keep ROS backwards compatible as much as they can – but it does mean potential for hidden gotchas.

When I followed instructions for installation and running, the first error message was about configuring the serial port which was fine. But after that… nothing. No information published to /odom or /base_scan, and no response to command sent to /cmd_vel.

Digging into the source code, the first thing to catch my attention was that the code opened a serial port for communication but did not set provision for timeout. Any problem in serial communication will cause it to hang, which is exactly what it is doing now. Adding a timeout is a quick and easy way to test if this is actually related.

Existing:

self.port = serial.Serial(port,115200)

Modified:

self.port = serial.Serial(port,115200,timeout=0.01)

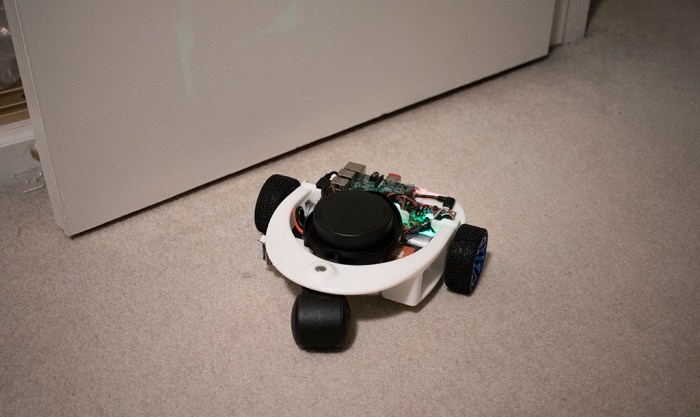

And that was indeed helpful, restoring nominal functionality to the ROS node. I could now drive the robot vacuum by sending commands to /cmd_vel and I can see a stream of data published to /odom and /base_scan.

I briefly contemplated being lazy and stopping there, but I thought I should try to better understand the failure. Onward to debugging!

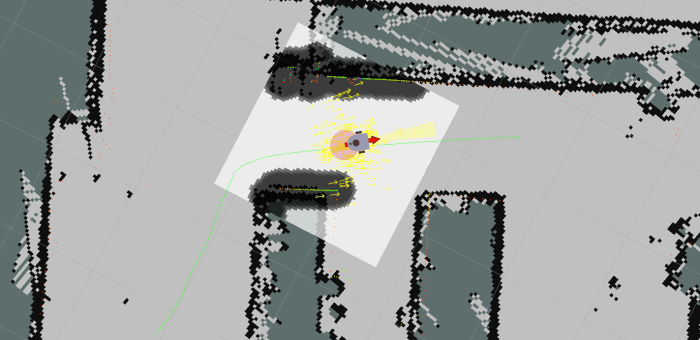

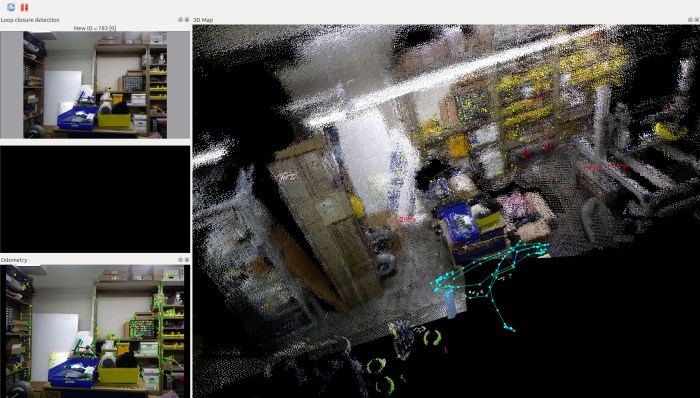

Depth sensor data from a Xbox 360 Kinect

Depth sensor data from a Xbox 360 Kinect  RTAB stands for Real-Time Appearance-Based, which neatly captures the constraint (fast enough to be real-time) and technique (extract interesting attributes from appearance) of how the algorithm approaches mapping an environment. I learned of this algorithm by reading the research paper behind

RTAB stands for Real-Time Appearance-Based, which neatly captures the constraint (fast enough to be real-time) and technique (extract interesting attributes from appearance) of how the algorithm approaches mapping an environment. I learned of this algorithm by reading the research paper behind

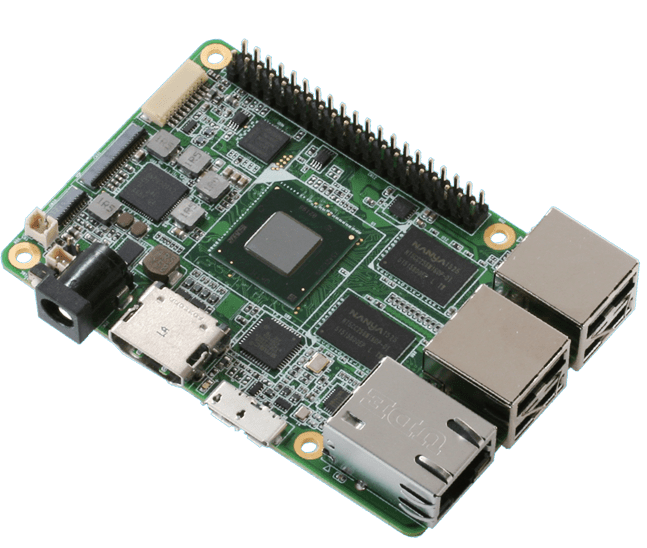

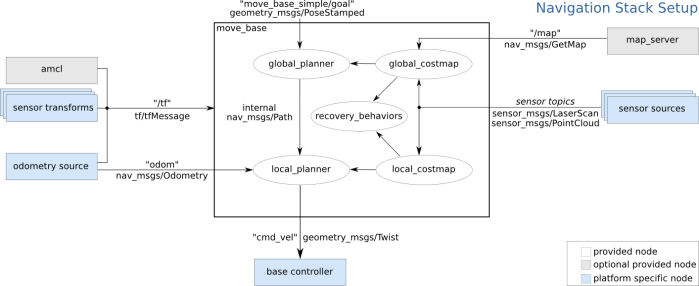

At this point my research has led me to ROS modules

At this point my research has led me to ROS modules  One of the ways a TurtleBot makes ROS easier and more approachable for beginners is by simplifying a robot’s world into two dimensions. It’s somewhat like the introductory chapters of a physics textbook, where all surfaces are friction-less and all collisions are perfectly inelastic. The world of a TurtleBot is perfectly flat and all obstacles have an infinite height. This simplification allows the robot’s environment to be represented as a 2D array called an occupancy grid.

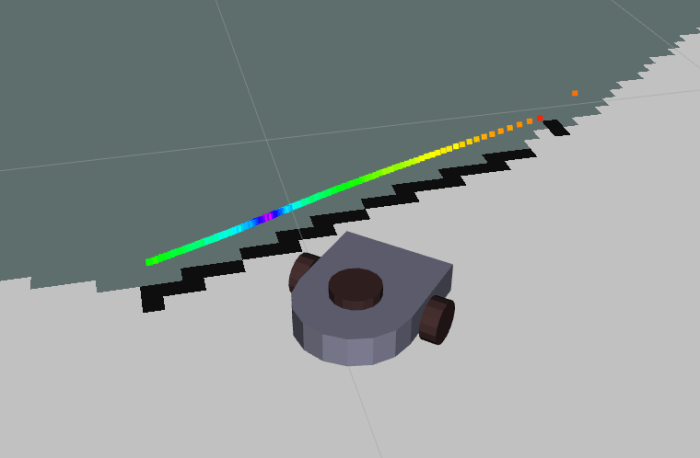

One of the ways a TurtleBot makes ROS easier and more approachable for beginners is by simplifying a robot’s world into two dimensions. It’s somewhat like the introductory chapters of a physics textbook, where all surfaces are friction-less and all collisions are perfectly inelastic. The world of a TurtleBot is perfectly flat and all obstacles have an infinite height. This simplification allows the robot’s environment to be represented as a 2D array called an occupancy grid.