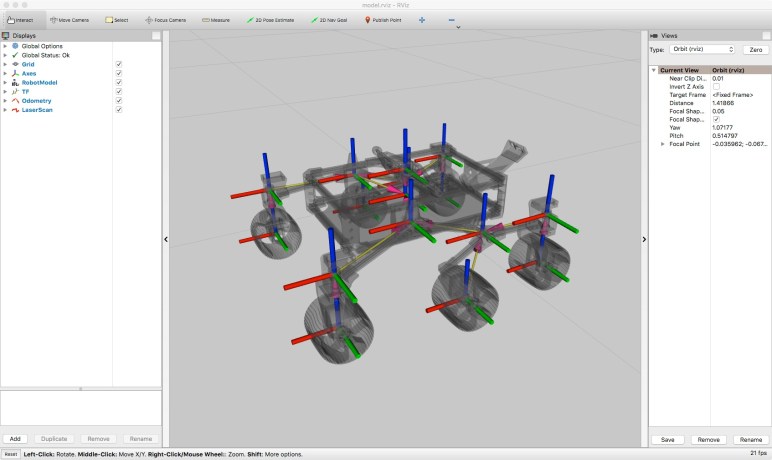

The ex-Luggable Mark II is up and running Folding@Home, chewing through work units quickly, mostly thanks to its RTX 2070 GPU. An old Windows 8 convertible tablet/laptop is also running as fast as it can, though its best speed is far slower than the ex-Luggable’s. The next recruit for my folding army is Luggable PC Mark I, pulled out of the closet where it had been gathering dust.

My old AMD Radeon HD 7950 GPU was installed in Luggable PC Mark I. It is quite old now, and AMD stopped releasing Ubuntu drivers for it after Ubuntu 14. Given its age, I’m not sure it even works for GPU folding workloads. It was designed and released near the dawn of the era when GPUs started finding work beyond rendering game screens, and its GCN1 architecture probably had the problems typical of the first version of any technology.

Fortunately, I also have an AMD Radeon R9 380 available. It was formerly in Luggable PC Mark II, but when I decommissioned the luggable chassis I retired it in favor of an NVIDIA RTX 2070. The R9 380 is a few years younger than the HD 7950, I know it supports OpenCL, and AMD has drivers for Ubuntu 18.

A few minutes of wrenching removed the HD 7950 from Luggable Mark I and put the R9 380 in its place, and I started working out how to install those AMD Ubuntu drivers. According to this page, the “All-Open stack” is recommended for consumer products, which I took to include my consumer-level R9 380 card. So my first pass was to run amdgpu-install. To verify OpenCL was up and running, I installed clinfo to check whether the GPU was visible as an OpenCL device.

```
Number of platforms                     0
```

Hmm. That didn’t work. On the advice of this page on the Folding@Home forums, I also ran sudo apt install ocl-icd-opencl-dev, but that had no effect, so I went back to reread the instructions. This time I noticed the feature breakdown chart comparing “All-Open” and “Pro”, where OpenCL is listed as a “Pro”-only feature.

So I uninstalled the “All-Open” stack and installed the “Pro” stack. After installing and rebooting, clinfo still showed zero platforms. Returning to the manual, I found fine print on a different page saying OpenCL is an optional component of the Pro stack. So I reinstalled yet again, this time with the --opencl=pal,legacy flag.
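For context on what “zero platforms” means: on Linux, clinfo reaches OpenCL through an ICD loader (the ocl-icd package family that ocl-icd-opencl-dev belongs to), and the loader only reports a platform for each vendor driver registered as an `.icd` file, by default under `/etc/OpenCL/vendors`. Installing the loader by itself adds no such file, which fits with that package making no difference. Below is a minimal Python sketch that lists those registrations, assuming the default directory (ocl-icd can be pointed elsewhere with the `OCL_ICD_VENDORS` environment variable); the function name is hypothetical.

```python
from __future__ import annotations

from pathlib import Path

# Assumption: the default directory the Linux OpenCL ICD loader scans.
ICD_DIR = Path("/etc/OpenCL/vendors")


def list_icd_vendors(icd_dir: Path = ICD_DIR) -> dict[str, str]:
    """Map each *.icd file name to the driver library it names.

    An empty result means the loader has no vendor driver to offer,
    which is consistent with clinfo reporting "Number of platforms 0".
    """
    if not icd_dir.is_dir():
        return {}
    vendors = {}
    for icd_file in sorted(icd_dir.glob("*.icd")):
        # Each .icd file holds one line: a library name or full path.
        vendors[icd_file.name] = icd_file.read_text().strip()
    return vendors


if __name__ == "__main__":
    found = list_icd_vendors()
    if not found:
        print(f"No OpenCL ICD files in {ICD_DIR}; expect zero platforms.")
    for name, library in found.items():
        print(f"{name}: {library}")
```

If this prints nothing but the “no ICD files” message after a driver install, the driver’s OpenCL component was most likely never installed or registered.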

Running clinfo now returns:

```
Number of platforms                     1
  Platform Name                         AMD Accelerated Parallel Processing
  Platform Vendor                       Advanced Micro Devices, Inc.
  Platform Version                      OpenCL 2.1 AMD-APP (3004.6)
  Platform Profile                      FULL_PROFILE
  Platform Extensions                   cl_khr_icd cl_amd_event_callback cl_amd_offline_devices
  Platform Host timer resolution        1ns
  Platform Extensions function suffix   AMD

  Platform Name                         AMD Accelerated Parallel Processing
Number of devices                       0

NULL platform behavior
  clGetPlatformInfo(NULL, CL_PLATFORM_NAME, ...)             No platform
  clGetDeviceIDs(NULL, CL_DEVICE_TYPE_ALL, ...)              No platform
  clCreateContext(NULL, ...) [default]                       No platform
  clCreateContext(NULL, ...) [other]                         <error: no devices in non-default plaforms>
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_DEFAULT)      No devices found in platform
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_CPU)          No devices found in platform
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_GPU)          No devices found in platform
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_ACCELERATOR)  No devices found in platform
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_CUSTOM)       No devices found in platform
  clCreateContextFromType(NULL, CL_DEVICE_TYPE_ALL)          No devices found in platform
```
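Output like this separates two failure modes: zero platforms means no OpenCL driver is registered at all, while one platform with zero devices means the driver loaded but did not claim the GPU. Below is a minimal Python sketch that tells the two apart from clinfo’s default text output. It assumes the “Number of platforms” / “Number of devices” line format shown here, and the function names are hypothetical.

```python
from __future__ import annotations

import re
import subprocess

# Matches clinfo's summary lines, e.g. "Number of devices   0".
COUNT_LINE = re.compile(r"\s*Number of (platforms|devices)\s+(\d+)")


def parse_clinfo(text: str) -> tuple[int, list[int]]:
    """Return (platform count, device count for each platform)."""
    platforms = 0
    devices = []
    for line in text.splitlines():
        match = COUNT_LINE.match(line)
        if not match:
            continue
        count = int(match.group(2))
        if match.group(1) == "platforms":
            platforms = count
        else:
            devices.append(count)
    return platforms, devices


def diagnose(text: str) -> str:
    platforms, devices = parse_clinfo(text)
    if platforms == 0:
        return "no OpenCL platform (no driver registered with the ICD loader)"
    if sum(devices) == 0:
        return "platform present but no devices (driver may not support this GPU)"
    return f"{sum(devices)} OpenCL device(s) available"


if __name__ == "__main__":
    # Assumes clinfo is installed, e.g. via "sudo apt install clinfo".
    try:
        output = subprocess.run(["clinfo"], capture_output=True, text=True).stdout
    except FileNotFoundError:
        print("clinfo not found on PATH")
    else:
        print(diagnose(output))
```

Fed the output above, this would land in the “platform present but no devices” branch.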

Finally, some progress. This is better than before, but zero devices is not good. Back to the overview page, which says the PAL OpenCL stack supports Vega 10 and later GPUs. My R9 380 is from the Tonga (GCN 3) line, which is quite a bit older than Vega (GCN 5). So I reinstalled with --opencl=legacy to see if it would make a difference.
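The stack choice above comes down to a GCN-generation check. Here is a small Python sketch of that rule. It uses only the architectures named in this post and assumes, per AMD’s overview page as summarized above, that PAL covers Vega 10 (GCN 5) and later while older parts fall to the legacy stack. The table and function names are hypothetical, and picking the matching stack does not by itself guarantee the GPU will show up as an OpenCL device.

```python
# GCN generations for the cards mentioned in this post.
GCN_GENERATION = {
    "Radeon HD 7950": 1,
    "Radeon R9 380 (Tonga)": 3,
    "Vega 10": 5,
}

# Assumption: PAL OpenCL targets Vega 10 (GCN 5) and newer; older GPUs use legacy.
PAL_MIN_GCN = 5


def opencl_stack_for(gcn_generation: int) -> str:
    """Return the value one would pass as --opencl= for this GCN generation."""
    return "pal" if gcn_generation >= PAL_MIN_GCN else "legacy"


if __name__ == "__main__":
    for card, gen in GCN_GENERATION.items():
        print(f"{card}: GCN {gen} -> --opencl={opencl_stack_for(gen)}")
```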

It did not. clinfo still reported zero OpenCL devices. AMD’s GPU compute initiative is called ROCm (Radeon Open Compute), but it is restricted to hardware newer than what I have on hand. Getting OpenCL running on Ubuntu, on hardware this old, is evidently outside the scope of AMD’s attention.

This was the point where I decided I was tired of this Ubuntu driver dance. I wiped the system drive and replaced Ubuntu with Windows 10 plus AMD’s Windows drivers. Folding@Home saw the R9 380 as a GPU compute slot, and I was up and running simulating protein folding. The Windows driver also claimed to support my older HD 7950, so one potential future project is to put both of these AMD GPUs in a single system and see whether driver support extends to GPU compute for multi-GPU folding.

For today I’m content to have just my R9 380 running on Windows. Ubuntu may have struck out on this particular GPU compute project, but it works well for CPU compute, especially virtual machines.