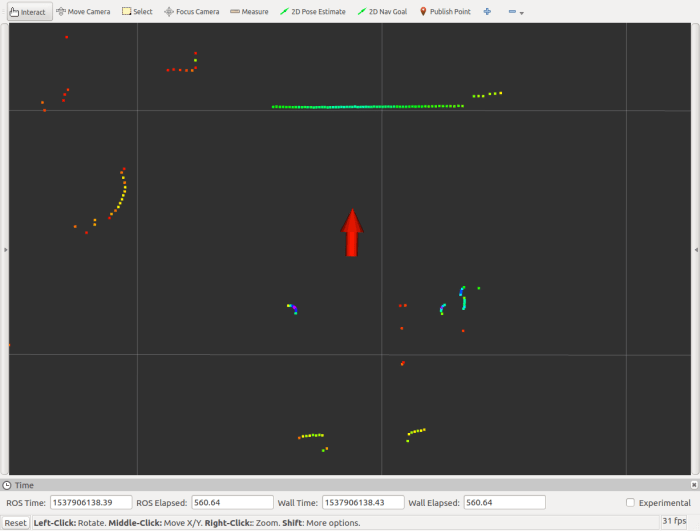

Now that I’ve decided to bring up the ROS navigation stack for Phoebe, where do I start? The ROS Wiki page for a subject is usually a good place to begin, since it tends to include a tutorial, and ROS navigation is no exception.

The first recommended page is actually a familiar sight: the brief overview on tf was required reading back when I first assembled the chassis. At the time, I could get away with a very simple static publisher, because I just had to tell ROS how and where my Neato LIDAR is mounted on my robot chassis. But now I guess I need to advance to the next step and publish robot state. This means describing Phoebe in more detail for ROS using an XML format called URDF (Unified Robot Description Format).

So in order to bring up ROS navigation on Phoebe, the navigation wiki page has pointed me to robot state publisher and also the ROS URDF Tutorial. To learn one thing I first have to learn another, which is the typical bootstrap process when picking up something new.

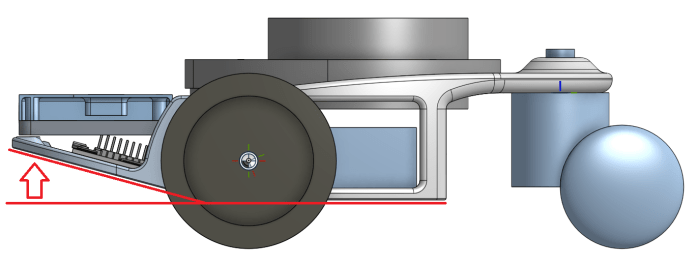

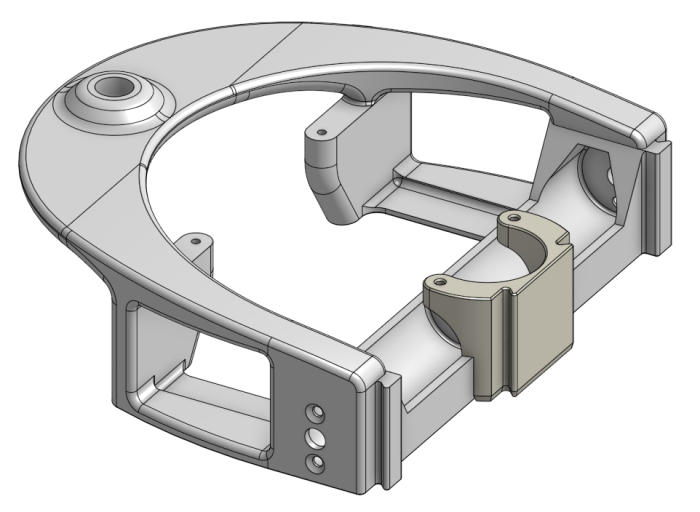

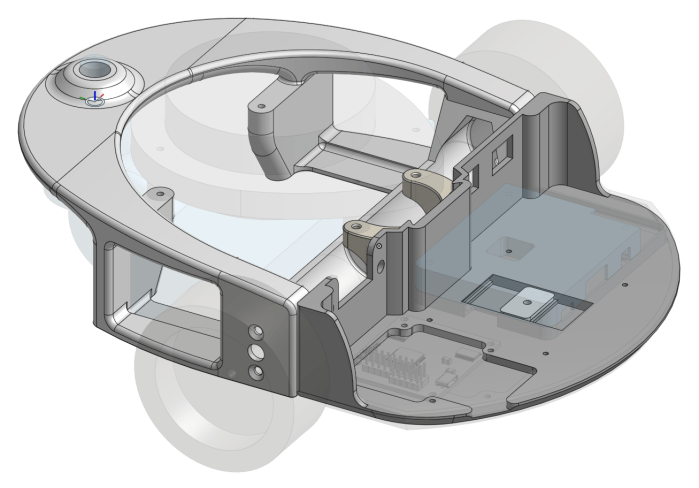

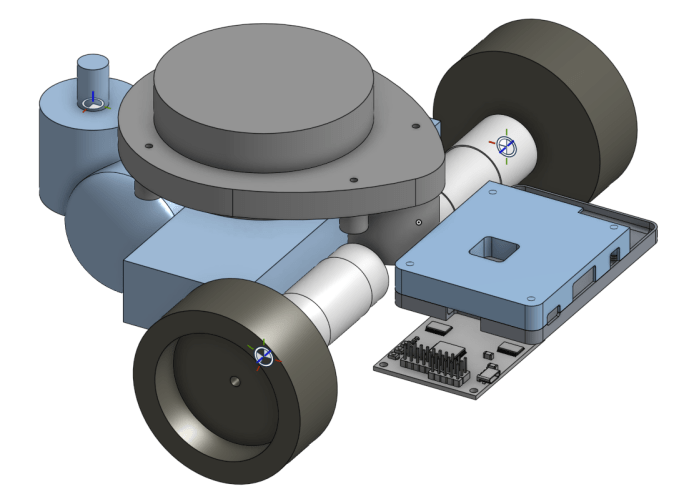

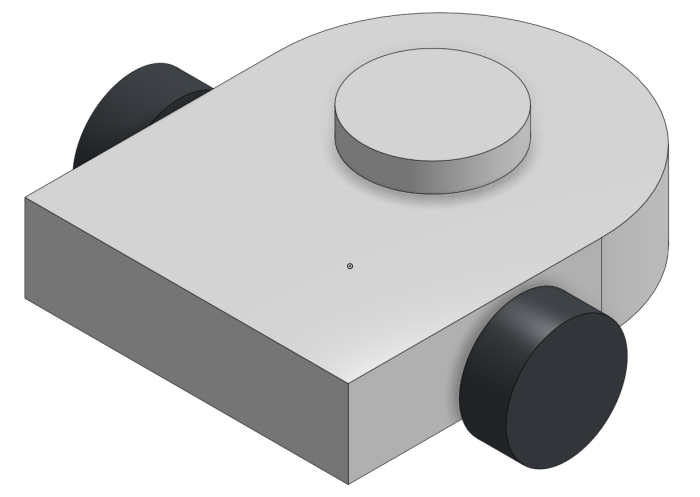

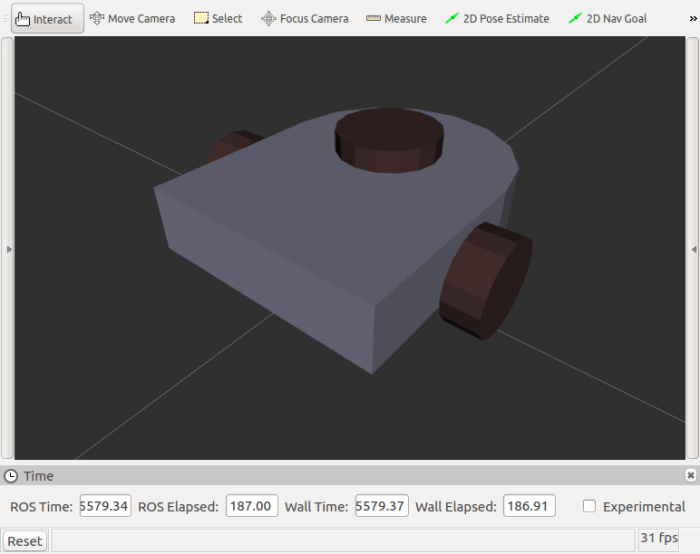

For the purposes of robot physics simulation, the robot should be described using very basic geometry: a combination of rectangular solids, cylinders, and spheres. This keeps the computation workload for collision detection simple. While the visual representation can be more complex than the collision detection representation, it doesn’t have to be. So for this first draft, I’ll just do a super simple Phoebe for visual representation, suitable for use in collision calculations if I get into that later.
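As a rough illustration of reusing one simple shape for both purposes, here is a minimal sketch that generates a URDF link whose `<visual>` and `<collision>` elements share the same box. It uses only Python's standard library to build the XML. The helper name `add_box_link`, the link name `base_link`, and the dimensions are all placeholders for illustration, not measurements taken from Phoebe.

```python
import xml.etree.ElementTree as ET


def add_box_link(robot, name, size_xyz):
    """Add a link whose visual and collision geometry are the same box."""
    link = ET.SubElement(robot, "link", name=name)
    # URDF expects box size as "x y z" in meters.
    size = " ".join(f"{v:g}" for v in size_xyz)
    for tag in ("visual", "collision"):
        geometry = ET.SubElement(ET.SubElement(link, tag), "geometry")
        ET.SubElement(geometry, "box", size=size)
    return link


robot = ET.Element("robot", name="phoebe")
# Placeholder dimensions in meters (assumed, NOT taken from Phoebe's CAD).
add_box_link(robot, "base_link", (0.20, 0.15, 0.05))

urdf_text = ET.tostring(robot, encoding="unicode")
print(urdf_text)
```

If the visual model later becomes more detailed, only the `<visual>` geometry would need to change, while the `<collision>` box could stay as simple as it is here.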

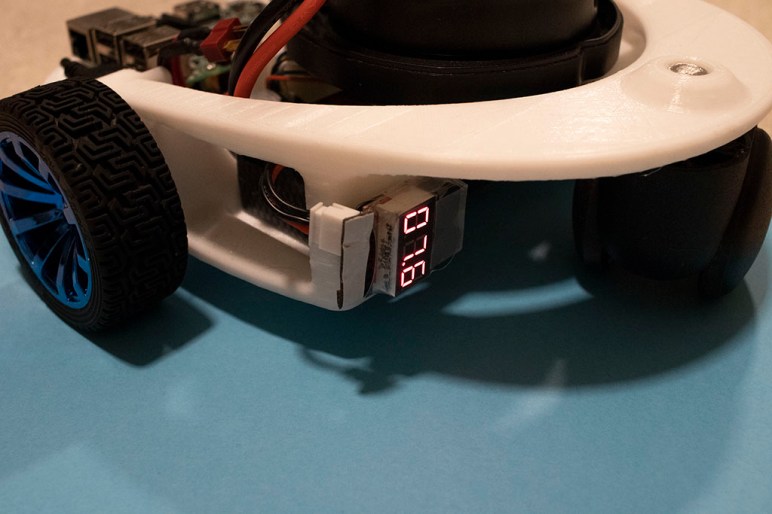

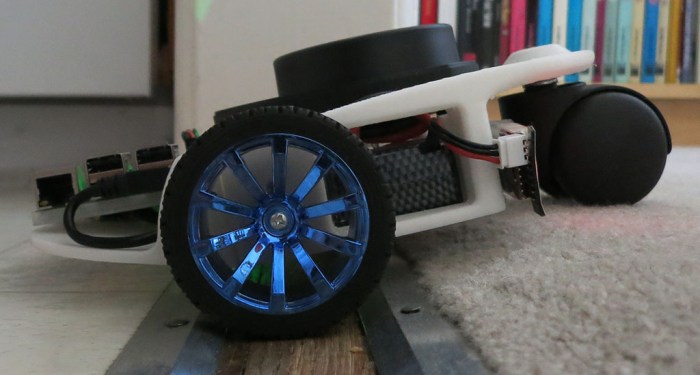

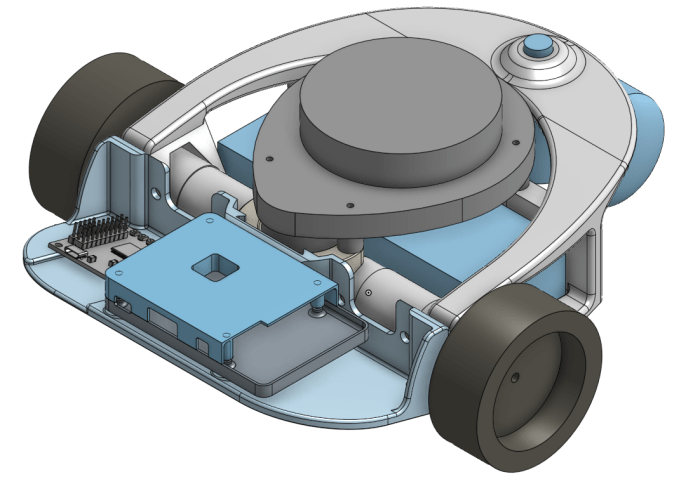

I started with Phoebe’s Onshape CAD file.

Taking the critical dimensions, I created a simplified version in Onshape CAD using just rectangular boxes and cylinders. With the robot reduced to these shapes, translating it into URDF is fairly straightforward.

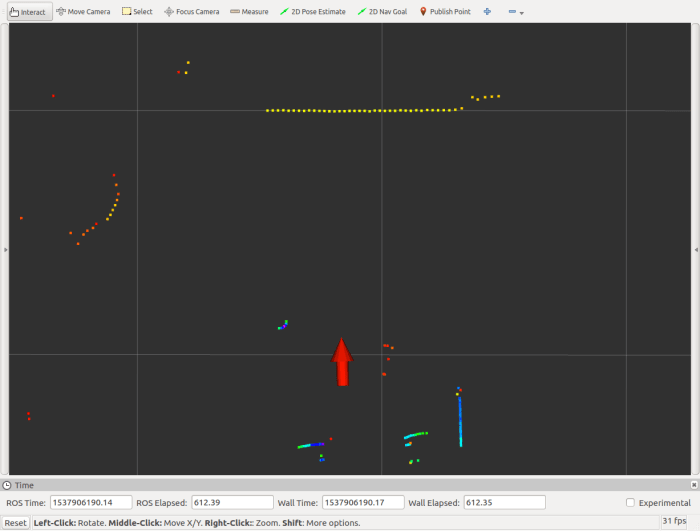

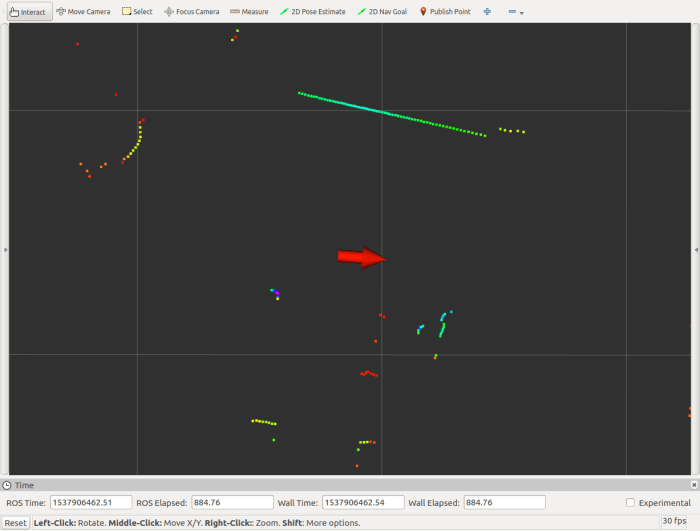

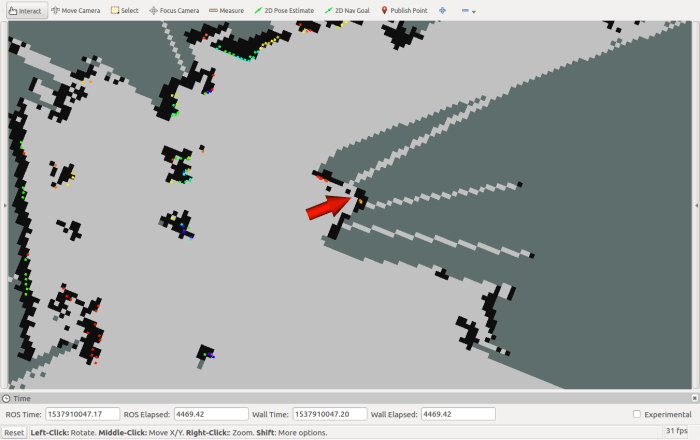

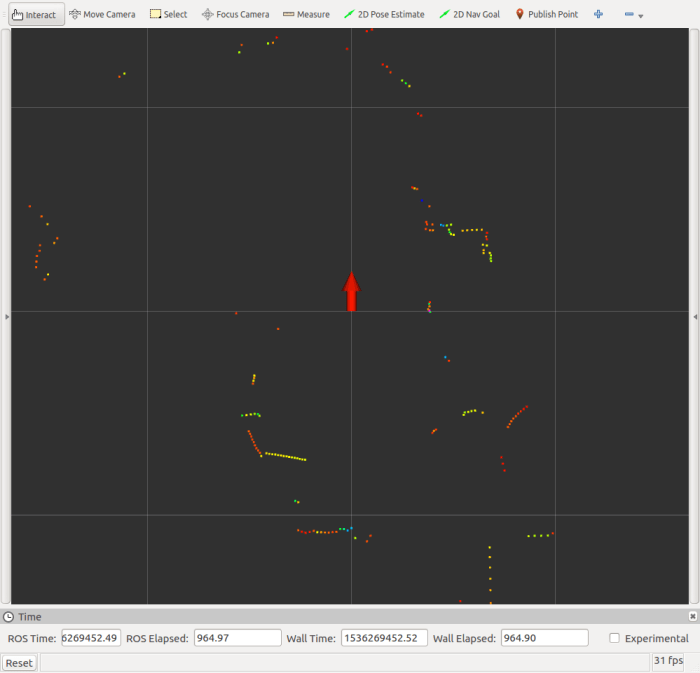

By measuring the dimensions in CAD, I could declare a few primitives with URDF and see how they look in RViz for comparison against CAD. Once the visual appearance is roughly correct, it’s time to tune the details and make sure they work for ROS functional purposes.
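To make "a few primitives" concrete, the sketch below assembles a box chassis and a cylinder for the LIDAR, joins them with a fixed joint, and runs a small sanity check that every joint refers to a link that exists. All names (`base_link`, `lidar_link`, `base_to_lidar`) and every number are hypothetical stand-ins, not values measured from the CAD model, and the helper functions are my own illustration rather than part of any ROS package.

```python
import xml.etree.ElementTree as ET


def add_link(robot, name, shape, **dims):
    """Add a link with one primitive (box, cylinder, sphere) as its visual."""
    link = ET.SubElement(robot, "link", name=name)
    geometry = ET.SubElement(ET.SubElement(link, "visual"), "geometry")
    ET.SubElement(geometry, shape, {k: str(v) for k, v in dims.items()})
    return link


def add_fixed_joint(robot, name, parent, child, xyz):
    """Rigidly attach child to parent, offset by xyz meters."""
    joint = ET.SubElement(robot, "joint", name=name, type="fixed")
    ET.SubElement(joint, "parent", link=parent)
    ET.SubElement(joint, "child", link=child)
    ET.SubElement(joint, "origin", xyz=xyz, rpy="0 0 0")
    return joint


def dangling_joint_links(robot):
    """Return link names referenced by joints but never declared."""
    declared = {link.get("name") for link in robot.findall("link")}
    return [
        ref.get("link")
        for joint in robot.findall("joint")
        for ref in joint
        if ref.tag in ("parent", "child") and ref.get("link") not in declared
    ]


robot = ET.Element("robot", name="phoebe")
# All dimensions below are assumed placeholders in meters.
add_link(robot, "base_link", "box", size="0.2 0.15 0.05")
add_link(robot, "lidar_link", "cylinder", radius=0.04, length=0.03)
add_fixed_joint(robot, "base_to_lidar", "base_link", "lidar_link", "0 0 0.04")

assert dangling_joint_links(robot) == []
urdf_text = ET.tostring(robot, encoding="unicode")
print(urdf_text)
```

Saving the printed text to a `.urdf` file should allow it to be loaded for a visual comparison against CAD, for example with the `display.launch` file from the `urdf_tutorial` package if that is installed.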

(Cross-posted to Hackaday.io)

When I started working on my own TurtleBot variant (before I even decided to call it Phoebe) my intention was to build a hardware platform to get first hand experience with ROS fundamentals. Phoebe’s
