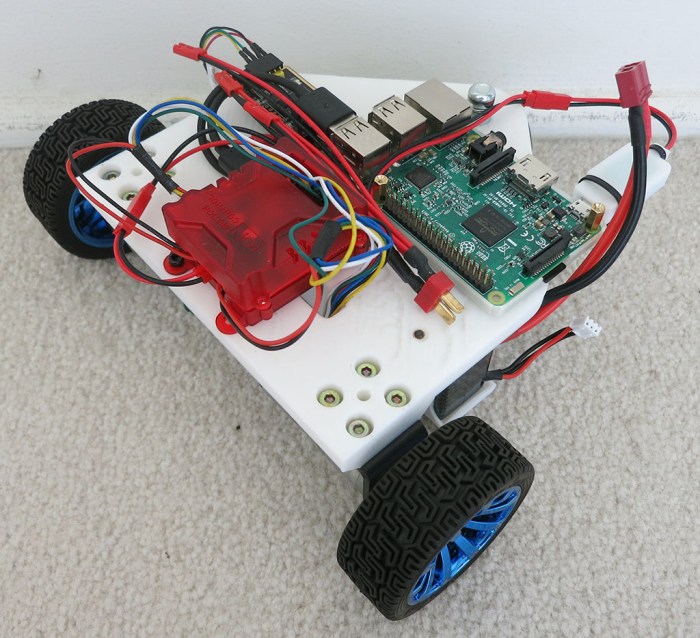

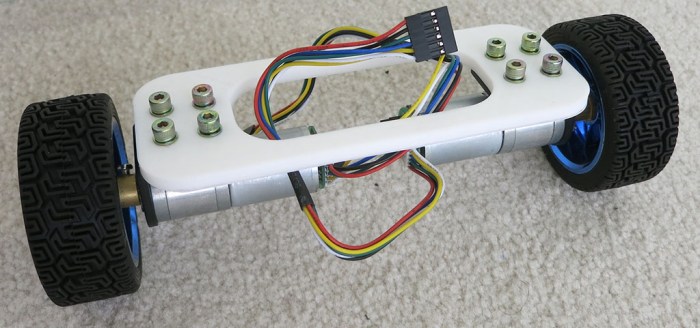

With the motors connected to the Roboclaw, their direction and encoders in sync, and PID values tuned, Phoebe can be driven around via the ROS /cmd_vel topic and report its movement via /odom. However, Phoebe has no awareness of its surroundings, which is where the LIDAR module comes in.

Salvaged from a Neato robot vacuum (and bought off eBay), it is the final major component to be installed on Phoebe. Since this is a rough first draft, the most expedient way to install the device is to drill a few holes for M3 standoffs, and mount the module on top of them. This allows the module clear lines of sight all around the robot, while sitting level with the ground. It is also installed as close to the center of the robot as practical. I don’t know if a center location is critical, but intuitively it seems to be a good thing to have. We’ll know more once we start driving it around and see what it does.
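
On the question of whether a center location matters: as far as the geometry goes, an off-center mount should only add a fixed offset between the LIDAR and the robot's center, which can be corrected when converting scan readings into points around the robot. Here is a minimal sketch of that conversion in plain Python. The function name `scan_to_robot_frame` and the `mount_x`, `mount_y`, and `mount_yaw` parameters are made up for illustration and are not part of any ROS package. In an actual ROS setup this offset would more likely be described as a transform between the laser frame and the robot base frame rather than in hand-written code.

```python
import math


def scan_to_robot_frame(ranges, angle_min, angle_increment,
                        mount_x=0.0, mount_y=0.0, mount_yaw=0.0):
    """Convert LIDAR range readings into (x, y) points in the robot's frame.

    ranges          -- distances in meters, one per beam
    angle_min       -- angle of the first beam in radians
    angle_increment -- angle between consecutive beams in radians
    mount_x/y/yaw   -- hypothetical mounting offset of the LIDAR relative
                       to the robot center (meters, meters, radians)
    """
    points = []
    for i, r in enumerate(ranges):
        # Skip missing or invalid readings (None, inf, NaN, non-positive).
        if r is None or not math.isfinite(r) or r <= 0.0:
            continue
        # Beam angle as seen from the robot, including any mount rotation.
        theta = angle_min + i * angle_increment + mount_yaw
        # Polar to Cartesian, then shift by the mount position.
        x = mount_x + r * math.cos(theta)
        y = mount_y + r * math.sin(theta)
        points.append((x, y))
    return points
```

For example, calling `scan_to_robot_frame(ranges, 0.0, math.radians(1.0))` would treat the input as one reading per degree with the LIDAR sitting exactly at the robot's center. If the module ended up 5 cm forward of center, passing `mount_x=0.05` shifts every point by that amount. So a centered mount keeps the numbers simple, but an offset looks like something software can account for.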

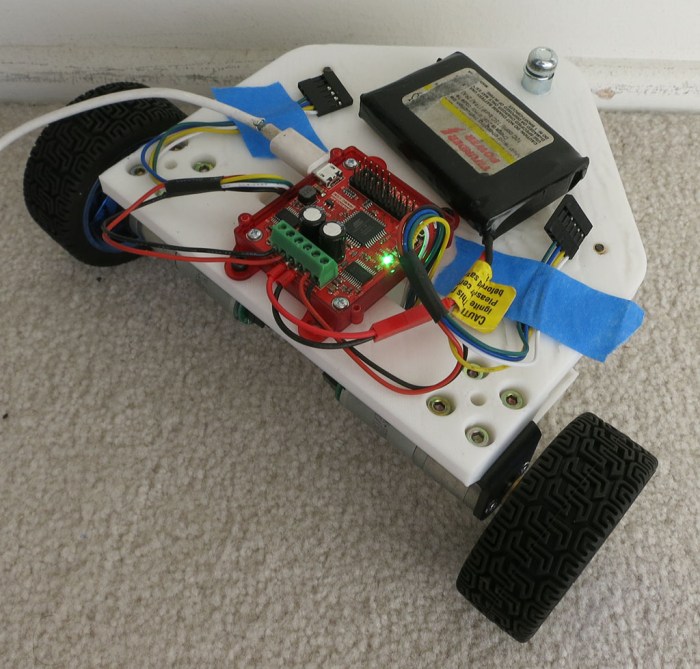

By this point the rough draft nature of the project is very visible. The LIDAR spin motor is the part that sticks out furthest below the module, and it inadvertently sits right on top of the Raspberry Pi’s Ethernet port, which is the tallest point on a Pi. Raising the LIDAR high enough so they don’t collide left a lot of empty space between the two modules. That space is not wasted at the moment, because the wiring mess is getting out of control and could use all the room it can get.

The next version should lay things out differently to make everything neater. In the meantime, it’s time to see if we can drive this robot around and watch its LIDAR plot. And once that basic process has been debugged, that should be everything necessary to enable ROS projects to give Phoebe some level of autonomy.

(Cross-posted to Hackaday.io)

As investigation into ROS continues, it’s raising concern that a self-contained autonomous robot will likely need a brain more powerful than a Raspberry Pi. It’s a very capable little computing platform and worked well serving as Sawppy’s brain when operated as a remote-control vehicle. But when the rover needs to start thinking on its own, would a little single-board computer

The most obvious point of concern is the low power ARM CPU. The raw processing capability of the chip is actually fairly respectable, and probably won’t be the biggest problem. But there are two downsides with the chip:

Back in January of this year I joined a team of pre-release beta testers for a project out of nearby Jet Propulsion Laboratory (JPL). While not exactly a state secret, we were asked not to overtly broadcast or advertise the project until after JPL’s own publicity office started doing so. This
