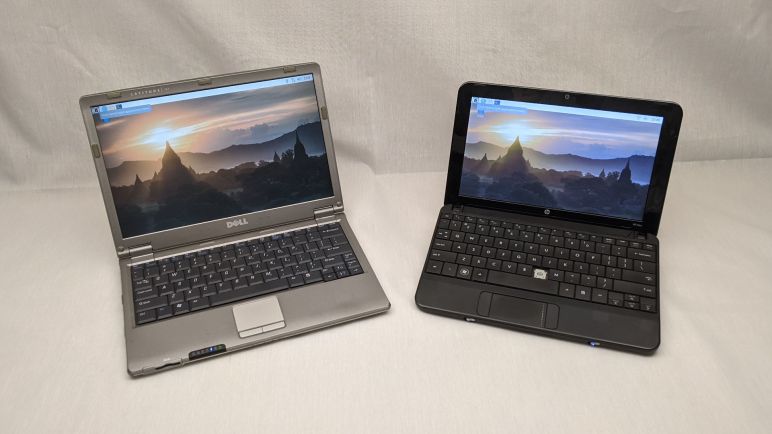

When I looked over LabVIEW earlier with the eyes of a maker, my biggest stumbling block was trying to connect to the kind of hardware a maker would play with. LabVIEW has a huge library of hardware interface components for sophisticated, professional-level electronics instrumentation. But I found nothing for simple one-off projects like the kind I have on my workbench. I got as far as finding a reference to a mystical "Direct I/O" mechanism, but no details.

In hindsight, that was perfectly reasonable. I was browsing LabVIEW information on National Instruments' primary site, which targets electronics professionals. I thought the lack of maker-friendly information meant National Instruments didn't care about makers, but I was wrong. It actually meant I was not looking in the right place. LabVIEW's maker-friendly face is on an entirely different site, the LabVIEW MakerHub.

Here I learned about LINX, an architecture for interfacing with maker-level hardware, starting with the ubiquitous Arduino and Raspberry Pi and extensible to others. From the LINX FAQ and the How LINX Works page, I got the impression that it allows individual LabVIEW VIs (virtual instruments) to correspond to individual pieces of functionality on an Arduino. Very importantly, it also implies that this representation is distinct from the physical transport layer, where there's only one serial (or WiFi, or Ethernet) connection between the computer running LabVIEW and the microcontroller.

If my interpretation is correct, this is a very powerful mechanism. It allows the bulk of a LabVIEW program to be set up without worrying about the underlying implementation. Here's one example that came to mind: a project can start small, with a single Arduino handling all hardware interfacing. Then, as the project grows and the serial link becomes saturated, functions can be split off onto separate Arduinos, each with its own serial link plugged into the computer. Yet doing so would not change the LabVIEW program.
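To make that idea concrete, here's a rough Python sketch of the decoupling I have in mind. This is not LINX's actual protocol or API: `Router`, `LoopbackTransport`, and the name-plus-bytes frame format are all made up for illustration, the transports simply record frames instead of talking to real serial ports, and the port names `COM3`/`COM4` are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class LoopbackTransport:
    """Hypothetical stand-in for one serial/WiFi/Ethernet link.
    It records outgoing frames instead of writing to real hardware."""
    name: str
    sent: list = field(default_factory=list)

    def send(self, frame: bytes) -> None:
        self.sent.append(frame)


class Router:
    """Hypothetical routing table: maps each logical function to the
    transport (i.e. the physical board) that currently serves it."""

    def __init__(self, default: LoopbackTransport):
        self.default = default
        self.routes: dict[str, LoopbackTransport] = {}

    def assign(self, function: str, transport: LoopbackTransport) -> None:
        # Move one function to a different board; nothing else changes.
        self.routes[function] = transport

    def call(self, function: str, *args: int) -> None:
        transport = self.routes.get(function, self.default)
        # Made-up frame format: function name, a colon, then one byte per argument.
        frame = function.encode() + b":" + bytes(args)
        transport.send(frame)


def program(router: Router) -> None:
    """The 'application': it names functions only, never transports."""
    router.call("digital_write", 13, 1)
    router.call("analog_read", 0)


# Start small: one board handles everything.
arduino_a = LoopbackTransport("COM3")
router = Router(arduino_a)
program(router)

# Later: split analog_read off onto a second board.
arduino_b = LoopbackTransport("COM4")
router.assign("analog_read", arduino_b)
program(router)
```

The interesting part is the last few lines: moving `analog_read` to a second board only changed the routing table, while `program()` stayed exactly the same. That is the property I'm hoping LINX gives a LabVIEW program.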

That design makes LabVIEW much more interesting. What dampens my enthusiasm is the lack of evidence of active maintenance on LabVIEW MakerHub. I see support for BeagleBone Black, but not for any of the newer BeagleBone boards (Pocket is the obvious candidate). The supported devices list covers Raspberry Pi only up to the 2 and Teensy only up to 3.1, and it includes the Espressif ESP8266 but not the ESP32, etc. Balancing that discouraging sight, the code is on GitHub, and there is more recent activity there and on the MakerHub forums. So it's probably not dead?

LINX looks very useful when the intent is to interface with LabVIEW on the computer side. But when we want to use something other than LabVIEW on the computer, we can use Firmata, another implementation of the same concept.

UPDATE: And just after I found it (a few years after it launched), NI is killing MakerHub with big bold red text across the top of the site: "This site will be deprecated on August 1, 2020."