After deciding I should learn to use FreeRTOS as part of my ESP32 project toolbox, I read through the free e-book PDF. I don’t understand all of it yet, but it gave me enough of a foundation to start a practice project using some basic FreeRTOS features. What’s the first thing I did? What we always do in embedded hardware: blink an LED!

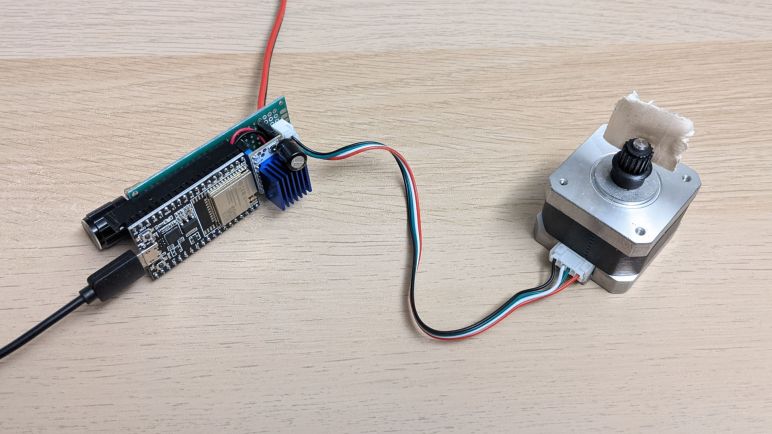

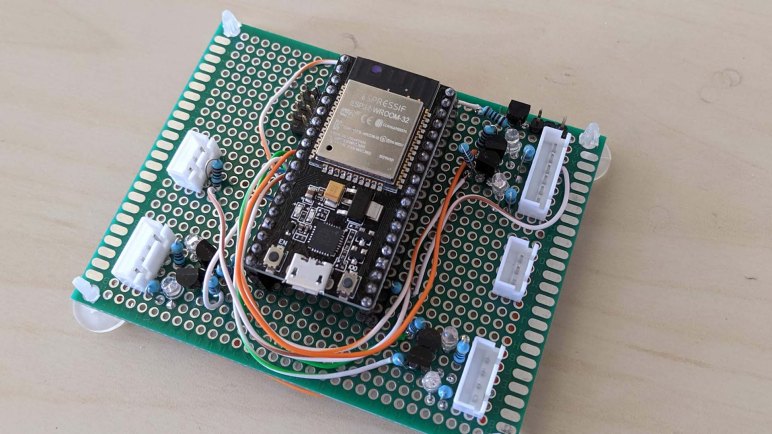

I’ve used this particular ESP32, mounted on this pink breadboard, for several projects. I had a few external LEDs wired up on the breadboard for experimentation, because I somehow never noticed that my ESP32 dev module had a second LED available for direct use. I knew about the red one that indicates power, but I didn’t notice the blue one until this project. Apparently this particular ESP32 development board (*) is not a direct clone of Espressif’s official ESP32 DevKitC, which has only one red LED to indicate power. I have no idea how common this two-LED layout is among ESP32 development boards; I’ll keep an eye out as I buy more.

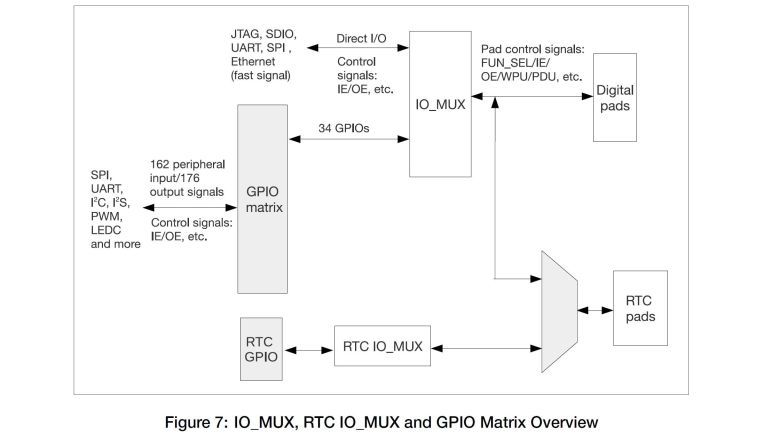

Anyway, this board has a blue LED wired to GPIO2, which is still routed to the same pin as on the Espressif module. The LED is wired in parallel and should not interfere with using that pin as an output, though it might affect the signal if I use the pin as an input. I spun up a FreeRTOS task solely to blink the LED at regular intervals, just to verify I could. My first effort put the ESP32 into an infinite reset loop. I eventually figured out that the task didn't have enough memory allocated for its stack. I was very surprised that a simple LED blinker needed more than one kilobyte of stack, but that isn’t the most important thing right now, so I’ll look into it later.

Once I had a FreeRTOS task running and blinking the onboard blue LED, I proceeded to set up my first message queue. Queues are the simplest way for FreeRTOS tasks to pass data to each other. I copied code from my earlier ADC experiment to read the position of an analog joystick, and queued each joystick position message for retrieval by another task. The first version of that reader task simply dequeued joystick positions and printed them to the serial terminal, but that was enough to verify the queue was working correctly.
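The C sketch below is a host-side model of the queue behaviour described above, not FreeRTOS code. A FreeRTOS queue is created with a fixed length and a fixed item size, and each item is copied into it by value, so the sending task can reuse its local variable immediately. The `joystick_msg_t` layout and the `msgq_*` functions are hypothetical stand-ins. On the ESP32 the real calls would be `xQueueCreate`, `xQueueSend` and `xQueueReceive`, which can also block with a timeout. This model leaves blocking out.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical message layout: one raw ADC reading per joystick axis. */
typedef struct {
    uint16_t x;
    uint16_t y;
} joystick_msg_t;

/* Fixed capacity, analogous to the queue length given to xQueueCreate. */
#define MSGQ_LENGTH 4

/* Host-side model of a FreeRTOS-style queue: fixed length, fixed item size,
 * items copied in and out by value. No blocking, no task scheduling. */
typedef struct {
    joystick_msg_t items[MSGQ_LENGTH];
    size_t head;   /* index of the oldest stored item */
    size_t count;  /* number of items currently stored */
} msgq_t;

void msgq_init(msgq_t *q) {
    memset(q, 0, sizeof *q);
}

/* Copies *msg into the queue. Returns false when the queue is full
 * (comparable to xQueueSend with a zero timeout failing). */
bool msgq_send(msgq_t *q, const joystick_msg_t *msg) {
    if (q->count == MSGQ_LENGTH) {
        return false;
    }
    size_t tail = (q->head + q->count) % MSGQ_LENGTH;
    q->items[tail] = *msg;  /* struct copy: caller may reuse its variable */
    q->count++;
    return true;
}

/* Copies the oldest item into *out. Returns false when the queue is empty. */
bool msgq_receive(msgq_t *q, joystick_msg_t *out) {
    if (q->count == 0) {
        return false;
    }
    *out = q->items[q->head];
    q->head = (q->head + 1) % MSGQ_LENGTH;
    q->count--;
    return true;
}
```

Suppose the sender queues a reading, overwrites its local variable, and sends again. The queue then holds two distinct readings, which are received oldest first. That copy-by-value behaviour is why a producer task like the joystick reader can keep a single local struct and refill it on every loop.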

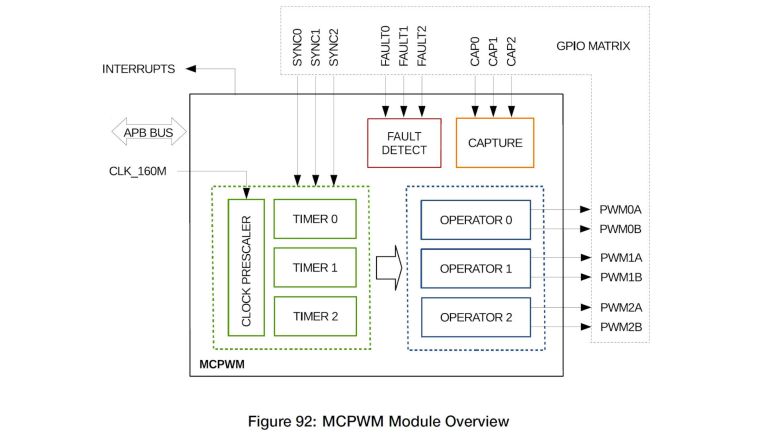

With those basic pieces established, I then wrote two more tasks. The first turns joystick positions into motor control commands and puts them into yet another queue. The second reads those motor control commands and adjusts the MCPWM control signals for the L298N motor driver accordingly.
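The joystick-to-motor task is essentially a translation function. Here is a minimal sketch with these assumptions:

- The joystick axis gives a 12-bit ADC reading (0 to 4095) that rests near mid-scale.
- The L298N is driven by a direction pin pair (IN1/IN2) plus a PWM duty cycle on its enable pin.

The center value, the deadband, and the `motor_cmd_t` message are hypothetical choices for illustration. They are not taken from the project's code.

```c
#include <stdint.h>

/* Hypothetical command message from the joystick task to the motor task. */
typedef enum { MOTOR_COAST = 0, MOTOR_FORWARD, MOTOR_REVERSE } motor_dir_t;

typedef struct {
    motor_dir_t direction;  /* would set the L298N IN1/IN2 pins */
    uint8_t duty_percent;   /* 0..100, PWM duty for the L298N enable pin */
} motor_cmd_t;

/* Assumed 12-bit ADC range with the stick resting near mid-scale. */
#define ADC_MAX      4095
#define ADC_CENTER   2048
#define ADC_DEADBAND 100   /* hypothetical: ignore small offsets near center */

/* Maps one joystick axis reading to a motor command. */
motor_cmd_t joystick_to_motor_cmd(uint16_t adc) {
    motor_cmd_t cmd = { MOTOR_COAST, 0 };
    int32_t offset = (int32_t)adc - ADC_CENTER;  /* -2048 .. +2047 */

    if (offset > ADC_DEADBAND) {
        /* Scale the travel remaining above the deadband to 0..100 percent. */
        int32_t span = (ADC_MAX - ADC_CENTER) - ADC_DEADBAND;
        cmd.direction = MOTOR_FORWARD;
        cmd.duty_percent = (uint8_t)(((offset - ADC_DEADBAND) * 100) / span);
    } else if (offset < -ADC_DEADBAND) {
        /* Same scaling for the travel below the deadband. */
        int32_t span = ADC_CENTER - ADC_DEADBAND;
        cmd.direction = MOTOR_REVERSE;
        cmd.duty_percent = (uint8_t)(((-offset - ADC_DEADBAND) * 100) / span);
    }
    return cmd;
}
```

Keeping this as a plain function with no ESP-IDF calls means it could be tested off the board. The task wrapped around it would only need to dequeue a reading, call the function, and enqueue the result.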

This exercise was a good test run to verify I could actually get the advantages I hoped for by adopting FreeRTOS:

- By writing individual subsystems as tasks, I could test each of them in isolation. In this case, I smoke-tested the ADC task by writing another task to read the data it queued.

- Each task could be in charge of its own ESP32 peripheral configuration. ADC configuration is handled by the joystick reading task, separate from the other tasks. Likewise, MCPWM configuration is handled by the L298N output task.

- Tasks run independently of each other and, more importantly, can be modified independently. I found the blue LED obnoxiously bright, so I went into my LED blinking task to shorten the on time to a brief flash and lengthen the time between flashes. Doing so did not affect the timing of any other task. Reading the joystick and sending motor control signals don’t have to run in sync, either: I could update motor speed more often than I read the joystick, which would be useful if I wanted to add motor acceleration logic.
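One way to use that independence for acceleration is to let the motor task run on its own fixed period. In FreeRTOS this could be done with `vTaskDelayUntil`, for example. On every tick, the task moves the actual speed a limited step toward the most recently commanded speed. The sketch below shows only that step function. The signed -100 to 100 speed scale and the step size of 5 per tick are hypothetical values.

```c
#include <stdint.h>

/* Hypothetical acceleration limit: maximum change in speed per motor-task tick. */
#define MAX_STEP_PER_TICK 5

/* Moves the current signed speed (-100..100) at most one step toward target.
 * Meant to be called on every motor-task tick, which can run more often
 * than new joystick commands arrive. */
int16_t ramp_toward(int16_t current, int16_t target) {
    int16_t delta = (int16_t)(target - current);
    if (delta > MAX_STEP_PER_TICK) {
        delta = MAX_STEP_PER_TICK;
    } else if (delta < -MAX_STEP_PER_TICK) {
        delta = -MAX_STEP_PER_TICK;
    }
    return (int16_t)(current + delta);
}
```

Starting from rest with a target of 100, the speed reaches full scale after 20 ticks. That holds regardless of how often the joystick task sends new commands, so the two tasks' rates stay decoupled.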

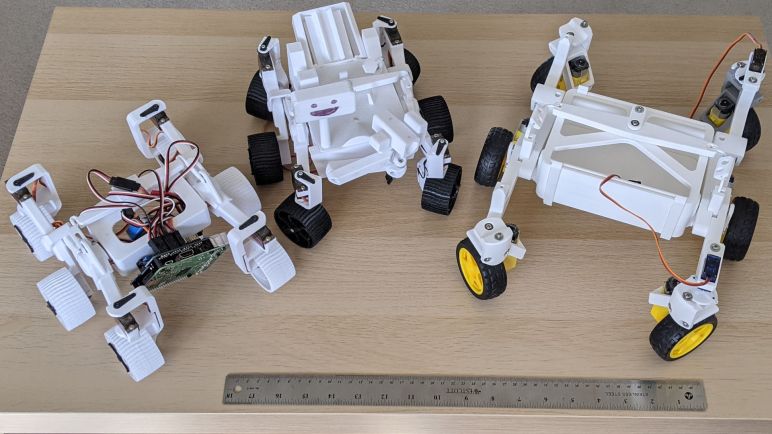

That last item is especially important. If I learn enough to design my interfaces (FreeRTOS queues) correctly, I could swap out FreeRTOS tasks without worrying about interfering with other tasks. I want this design to scale from micro Sawppy to the regular-size Sawppy V2 by swapping in modules written for different motor controllers. By the same token, it should make quick hacks easier, like swapping out a single steering motor as the SGVHAK rover had to do. I also want different control input options, from a simple wired joystick to a web UI to ROS messages. Each would be a different task module, but they could all use the same message queue format to communicate with the rest of the rover.
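A shared message format plus a common output interface is one way to get that swappability in C. In the sketch below, every input task would produce the same `rover_cmd_t`, and each motor controller module is represented by a function pointer in a `motor_backend_t`. All of these names and the -100 to 100 scales are hypothetical illustrations, not Sawppy's actual design. The same goes for the RC servo back end and its pulse widths (1500 µs center, ±500 µs at full speed).

```c
#include <stdint.h>

/* Hypothetical shared message: every input task (wired joystick, web UI,
 * ROS bridge) would produce this same struct. */
typedef struct {
    int8_t speed;     /* -100..100, percent of full speed, sign is direction */
    int8_t steering;  /* -100..100, percent of full steering angle (unused here) */
} rover_cmd_t;

/* Hypothetical output-module interface: a back end converts a speed into
 * the hardware-specific value it would write. */
typedef struct {
    const char *name;
    int32_t (*apply_speed)(int8_t speed);
} motor_backend_t;

/* L298N-style back end: speed magnitude becomes PWM duty percent
 * (direction pins would be set from the sign, not shown). */
static int32_t l298n_apply_speed(int8_t speed) {
    return speed < 0 ? -speed : speed;
}

/* Hypothetical RC-servo-style back end: 1500 us pulse means stop,
 * 5 us per percent of speed, so +/-100 maps to 2000/1000 us. */
static int32_t rc_servo_apply_speed(int8_t speed) {
    return 1500 + (int32_t)speed * 5;
}

const motor_backend_t L298N_BACKEND = { "l298n", l298n_apply_speed };
const motor_backend_t RC_SERVO_BACKEND = { "rc_servo", rc_servo_apply_speed };

/* The motor task depends only on the interface, so changing controllers
 * means passing a different back end, not editing the input tasks. */
int32_t drive(const motor_backend_t *backend, const rover_cmd_t *cmd) {
    return backend->apply_speed(cmd->speed);
}
```

Swapping motor controllers then means handing the motor task a different back end, while the input tasks and the queue message format stay untouched.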

Knowing how to set up FreeRTOS tasks and queues isn’t nearly the whole picture of using FreeRTOS, but it gave me a good introduction and built confidence for continuing forward. As a side effect of this software project, I also made a valuable non-software discovery: cardboard backing for electronics prototypes.

Source code for this project is publicly available on GitHub.

(*) Disclosure: As an Amazon Associate I earn from qualifying purchases.